To be fair, Turing closed the gap with GCN in terms of running mixed workloads (graphics/compute) with zero performance penalty.

Wolfenstein 2 runs excellently on the 2080 Ti with Async Compute.

It's Maxwell/Pascal that have huge issues, something to do with the lack of hardware scheduling, which incurs a context switch penalty (that's why nVidia recommends disabling Async on those cards).

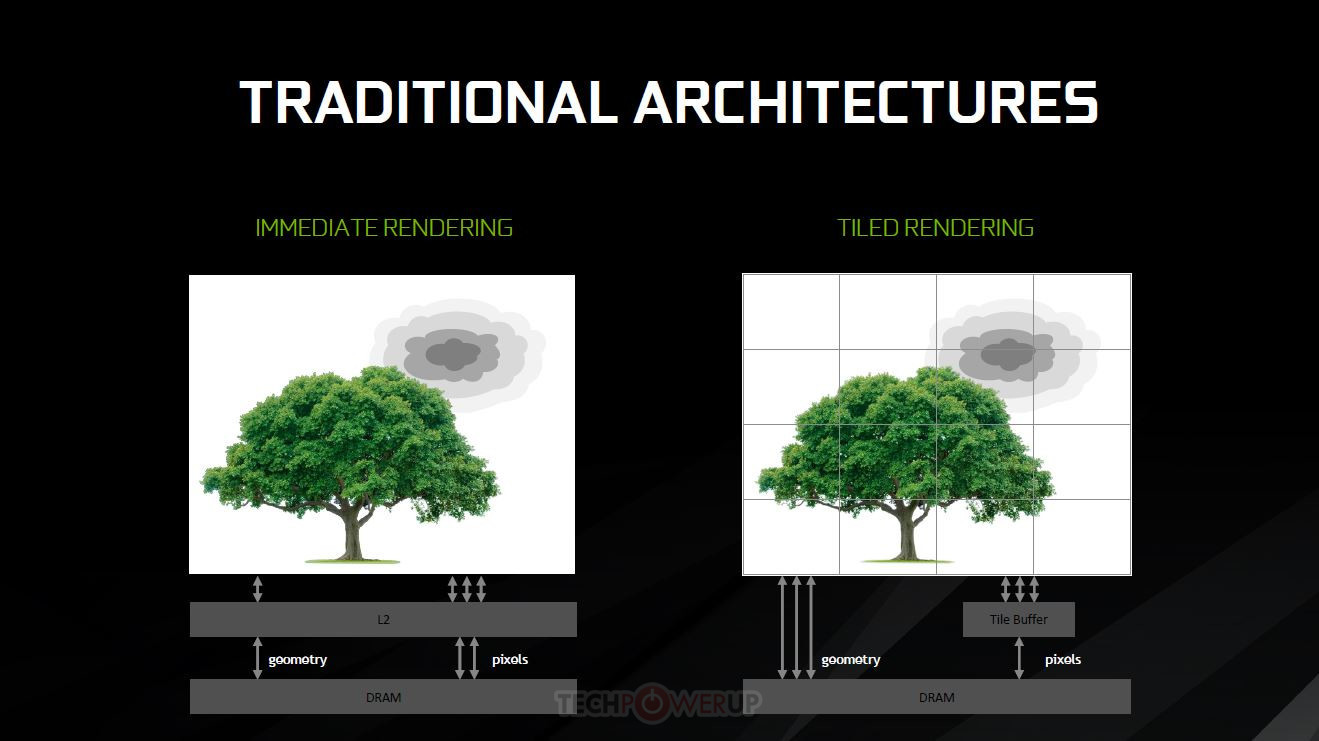

What nVidia has had for a long time is tile-based rendering and more advanced memory compression algorithms. That's where all the rasterization efficiency comes from:

Looking back on NVIDIA's GDC presentation, perhaps one of the most interesting aspects approached was the implementation of tile-based rendering on NVIDIA's post-Maxwell architectures. This has been an adaptation of typically mobile approaches to graphics rendering which keeps their specific...

www.techpowerup.com

Vega introduced DSBR and Navi vastly improved memory compression, so they're on par with nVidia.

Too bad plenty of uninformed people will keep confusing rasterization (plain old 3D graphics) with GPU compute (using the GPU to offload CPU tasks).

A flop is a flop (in terms of GPU compute, not rasterization), just like a kilogram is a kilogram. Only an uneducated person would argue that 1kg of iron is "heavier" than 1kg of cotton.

We're talking about the same people that think the GPU these days still does graphics first and foremost (spoiler alert: we don't live in the 3Dfx Voodoo era anymore, get with the times!), which is definitely not true for modern AAA games:

It's no coincidence that this game runs at 4K60 on XB1X, while there's no chance it's going to hit 4K60 on a 4TF Navi (Lockhart), and that's OK (Lockhart won't promise to deliver 4K gaming).
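For anyone who wants to sanity-check the TF numbers being thrown around, here's a rough sketch of the usual theoretical-peak formula (shaders x clock x 2 FLOPs per clock for an FMA). The function name `peak_tflops` is just something I made up for illustration. The XB1X figures (2560 shaders at 1172 MHz) are the published specs, and the 4 TF Lockhart number is simply the figure quoted above, not a confirmed spec. This only gives peak compute throughput and says nothing about rasterization efficiency.

```python
def peak_tflops(shaders: int, clock_mhz: float, flops_per_clock: int = 2) -> float:
    """Theoretical FP32 peak in TFLOPS.

    flops_per_clock defaults to 2 because one fused multiply-add
    counts as two floating-point operations per shader per clock.
    """
    return shaders * clock_mhz * 1e6 * flops_per_clock / 1e12


# Xbox One X: 40 CUs x 64 lanes = 2560 shaders at 1172 MHz
xb1x = peak_tflops(2560, 1172)

# Lockhart: 4 TF is the figure quoted in this post (an assumption, not a spec)
lockhart_tf = 4.0
ratio = lockhart_tf / xb1x

print(f"XB1X peak: {xb1x:.2f} TF")
print(f"Lockhart / XB1X peak compute: {ratio:.2f}")
```

So on paper a 4 TF part has about two thirds of the XB1X's peak compute, which is the gap a compute-heavy game would actually feel.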