Any tech heads know if 60/1080 would work?

No need for tech heads, but only devs could tell that for sure. Still, most games will have options for lower res and higher fps; that was the whole point of next gen, I think, same as they have now on X1X.


Assassin's Creed Valhalla will run at 4K 30FPS on Xbox Series X

- Thread starter Agnostic2020


Oddspeak

Member

Totally expected and entirely reasonable.

30fps is fine, and developers, particularly big AAA blockbuster developers, know the overwhelming majority of their audience don't want high frame rates to come at the expense of visuals.

If you care about 60fps+, pay the premium and buy a PC, because you're a minority that mass-market consoles will never cater to.

This is another thing people fail to grasp on this subject. A significant portion of the people who play these western, AAA, open world action-adventure games genuinely don't care about 60 FPS for them. We don't see them because they don't join forums like this and they don't follow "enthusiast" YouTubers and stuff. They're semi-casual players who want something pretty to look at and only play games to unwind.

They won't admit it, but Ubisoft knows this, and that's why they likely don't feel obligated to push for 60 on these games.

Because it's better to lock at 30 if you can't reach 60, as any result in between makes no sense and can feel unstable. So there's no point leaving it unlocked.
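One way to see why an in-between frame rate can feel unstable is to model a fixed 60 Hz display with vsync on. This is only a rough sketch under stated assumptions, not how any particular console presents frames: the function name `vsync_hold_pattern` is made up for illustration, the renderer is assumed to finish frames at a perfectly steady rate, and variable refresh rate is ignored. It counts how many display refreshes each frame stays on screen.

```python
def vsync_hold_pattern(fps: int, refresh_hz: int = 60, frames: int = 6) -> list[int]:
    """Refresh ticks each frame is held for on a fixed-rate, vsynced display.

    Assumes frame i is finished at time (i + 1) / fps and appears on the
    first refresh at or after that moment (no variable refresh rate).
    """
    # Exact integer ceiling of (i + 1) * refresh_hz / fps: the tick where frame i appears.
    ticks = [-(-(i + 1) * refresh_hz // fps) for i in range(frames + 1)]
    # A frame stays on screen until the next frame's tick.
    return [later - earlier for earlier, later in zip(ticks, ticks[1:])]


if __name__ == "__main__":
    for fps in (30, 45, 60):
        holds = vsync_hold_pattern(fps)
        times_ms = [round(h * 1000 / 60, 1) for h in holds]
        print(f"{fps} fps -> refreshes per frame {holds}, on-screen ms {times_ms}")
```

Under these assumptions 30 and 60 fps hold every frame for the same number of refreshes, while 45 fps alternates between one and two refreshes (about 16.7 ms and 33.3 ms), which is the kind of uneven pacing the post is describing.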

What would be nice is to have a ray tracing on/off toggle; then you could get more fps by turning it off, let's say.



Geki-D

Banned

They won't admit it, but Ubisoft knows this, and that's why they likely don't feel obligated to push for 60 on these games.

And they're right for exactly the reasons you say. The whole "IT MUST BE 60FPS!!" craze is just silly. It's better, sure, but there are some types of games where 30 is ok. SP adventure games being one of them. I still think Ubi could do 60 here if they really looked into it, but 30 is far from being the end of the world.

I Love Rock 'n' Roll

Member

At this point it feels like MS or Sony is going to come out and say like man buy it if you want, I don't care lol.

Spend 500€/$ to get full 4K and 30fps. 12 tflops powa, feel the powa of the SSD dudes! Ultimate poweeeeer! Lol.

On Pro, it's gonna be 1080p 30fps, on oneX sometimes 4k and between 25 and 30 fps.

Yeahhhhh! Noooo.

Geki-D

Banned

What would be nice is to have a ray tracing on/off toggle; then you could get more fps by turning it off, let's say.

I imagine a lot of devs are going to use it to lighten their workload. Like despite the amount of resources it takes up, seeing as it's handling all of the reflections and whatnot, devs don't have to deal with that manually. Being able to turn it off would be like having an option to turn off dynamic lighting; the devs would have to add in pre-baked shadows & lighting so that their game doesn't suddenly look like a load of crap. That adds a lot of work.

Games will be made on next consoles with ray-tracing as an assumed tool they must use, not as a bonus option most people don't have access to like it is now on PC.

Insert Disk Two

Member

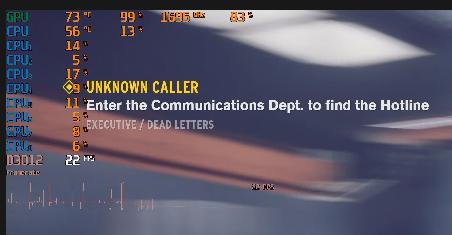

Ubisoft told Eurogamer Portugal that Valhalla will run at a minimum of 30 FPS in 4K on XSX.

Xbox Series X 400 Euro price confirmed

I imagine a lot of devs are going to use it to lighten their workload. Like despite the amount of resources it takes up, seeing as it's handling all of the reflections and whatnot, devs don't have to deal with that manually. Being able to turn it off would be like having an option to turn off dynamic lighting; the devs would have to add in pre-baked shadows & lighting so that their game doesn't suddenly look like a load of crap. That adds a lot of work.

Games will be made on next consoles with ray-tracing as an assumed tool they must use, not as a bonus option most people don't have access to like it is now on PC.

So you're telling me those new games on PC will be without the option too? There must be an option there, like for all that HBAO+ stuff, so it could come to consoles too if PC gets it. They'll all be made from the same port anyway, won't they?

Jayjayhd34

Member

Can't say I'm not disappointed but in a way I'm glad because it steers me towards upgrading my PC (or buying a spangly new one) and buying the PS5 instead of both XSX and PS5.

If affordability isn't a problem then PC/ps5 will be the best choice.


DunDunDunpachi

Patient MembeR

Haven't played the series in awhile, but isn't 30 fps standard for the console versions?

Drunkensamurai

Member

Can't say I'm surprised, but this is a non-issue. A lot of people that buy this game won't care about 30 vs 60 FPS.

existensmaximum

Member

I really couldn't care less about this game being 30 fps. I think it's 100% fine, and I'd rather take prettier graphics than 60 fps any day of the week

Probably not a common opinion on this forum, but whatever


Dee Dah Dave

Member

Message to developers / publishers - I am not buying any forced SJW or 30fps games this coming generation

Stuart360

Member

Message to developers / publishers - I am not buying any forced SJW or 30fps games this coming generation

So you are only planning on buying a couple of games a year then?

Dee Dah Dave

Member

So you are only planning on buying a couple of games a year then?

Yes!.....a gamepass sub and the odd full priced game is more than enough for me these days

Jayjayhd34

Member

So you are only planning on buying a couple of games a year then?

They change the definition so much they won't have any games to play.

Majima Everywhere System

Member

Thanks Ubisoft. You always come through. I'm replacing an original Xbox One and broken PS4 Pro this winter.

Less reason to upgrade=more next gen consoles for me.


GymWolf

Member

Kind of a hot take, but if I have to choose between an unlocked 60 fps with wild fluctuations and a rock-solid 30, I think the latter is better.

I'm re-playing Infamous Second Son on Pro, and I'm using the locked 30 fps mode instead of the "60 frames but not really" mode; wild fluctuations are worse than a locked lower framerate for my taste (the 30 fps mode still has some problems, but far fewer than the 60 mode).

During this gen almost no 60 fps mode in any game was ROCK SOLID locked, and it fucking shows, except for Gears 5 and maybe Doom or Halo 5.

Having a framerate that goes 60-59-55-57-49-52-59-60 all the time is very distracting, to say the least.

Thank god for having a beast PC to play 99% of games at a locked 60 frames.

Strangely enough, no dev on console ever thought about a locked 45 frame mode.
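To put rough numbers on the fluctuation, the sketch below converts frame-rate readings into per-frame times. It assumes, purely for illustration, that each number in the 60-59-55-57-49-52-59-60 sequence is the instantaneous rate of a single frame; the helper names `frame_times_ms` and `frame_time_spread_ms` are hypothetical.

```python
def frame_times_ms(fps_samples: list[float]) -> list[float]:
    """Convert instantaneous frame-rate readings to per-frame times in milliseconds."""
    return [1000.0 / fps for fps in fps_samples]


def frame_time_spread_ms(fps_samples: list[float]) -> float:
    """Gap between the slowest and fastest frame in the sample, in milliseconds."""
    times = frame_times_ms(fps_samples)
    return max(times) - min(times)


if __name__ == "__main__":
    fluctuating = [60, 59, 55, 57, 49, 52, 59, 60]  # the sequence quoted in the post
    locked_30 = [30] * len(fluctuating)
    for label, samples in (("unlocked 60", fluctuating), ("locked 30", locked_30)):
        print(label, [round(t, 1) for t in frame_times_ms(samples)],
              "spread:", round(frame_time_spread_ms(samples), 2), "ms")
```

In this simplified view the unlocked mode swings by a few milliseconds from frame to frame while the locked mode delivers every frame on the same schedule, which is the trade-off the post prefers.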


Geki-D

Banned

So you're telling me those new games on PC will be without the option too? There must be an option there, like for all that HBAO+ stuff, so it could come to consoles too if PC gets it. They'll all be made from the same port anyway, won't they?

That is a good point, and these are cross-gen games too, so the higher effort implementations are in place for the PS4/XB1 versions. Though I still think it sets a bad precedent to have the option to turn it off. Exclusives probably won't have the option, neither will games later down the line, and consoles really aren't about messing with visual effects to get the best fps or rez.

Of course this could also be seen as a reason to point out that not making next gen exclusive games by having PC ports is actually going to cause devs to have to do extra work because they can't rely on ray tracing being a default.

Hendrick's

If only my penis was as big as my GamerScore!

The first of a huge list of games running at 30 fps.

Every new gen is the same, people should learn the lesson already

I think it will be a much better ratio next gen. If you look at the Series X page here, out of the 12 games 6 are listed as 60 fps. I'm not great at math, but that's like 50% which is a huge improvement over this gen if that trend holds up.


OneShotThrill

Member

Like I've always said.....stop creaming your pants over specs. It's a lot of marketing BS. Stop letting Papa Phil and Daddy Cerny get you guys all hyper. Let the software speak for itself.

OneShotThrill

Member

I think it will be a much better ratio next gen. If you look at the Series X page here, out of the 12 games 6 are listed as 60 fps. I'm not great at math, but that's like 50% which is a huge improvement over this gen if that trend holds up.

I think the issue is that those titles listed aren't really pushing the graphics too hard. Honestly I don't think there will be too many AAA 60 fps games on console. The industry leans towards visuals, honestly.

I Love Rock 'n' Roll

Member

I think it will be a much better ratio next gen. If you look at the Series X page here, out of the 12 games 6 are listed as 60 fps. I'm not great at math, but that's like 50% which is a huge improvement over this gen if that trend holds up.

What about PS4 ports, with the PS4 set as the base hardware? How is that an improvement with Zen 2 inside, compared with what the Pro and X achieved over the base models for most games this gen? Where have the 8K and 120 fps gone?


DoctaThompson

Banned

That is a good point, and these are cross-gen games too, so the higher effort implementations are in place for the PS4/XB1 versions. Though I still think it sets a bad precedent to have the option to turn it off. Exclusives probably won't have the option, neither will games later down the line, and consoles really aren't about messing with visual effects to get the best fps or rez.

Of course this could also be seen as a reason to point out that not making next gen exclusive games by having PC ports is actually going to cause devs to have to do extra work because they can't rely on ray tracing being a default.

You realize that it's NOT how it works, right? Lol

Riven326

Banned

No it doesn't, so a 1440p 60 FPS optional mode would be great.

Now see, that's a reasonable response. I would also like to see an option like that for those that prefer a higher frame rate.

Polygonal_Sprite

Member

Here's your windows overhead delusion.

Memory:

2 GB OS usage on Windows

2.5 GB OS usage on Xbox

CPU:

Windows uses 3% on an 8-core, 16-thread Ryzen 3700.

Consoles lock 1 core out of the 8 away, which equals 12.5% usage for OS tasks.

GPU:

Windows: 0-1% usage on PC

Consoles? probably the same

U tried.

U do realize consoles are the same as PCs these days, right? Let me help you a bit.

Ubisoft has to optimize for

Xbox series X

PS5

Lockhart (if that even releases)

PS4

PS4 pro

Xbox one X

Xbox one

Xbox one S

What if the old consoles get phased out after 2-3 years?

U will have:

Xbox series X

Xbox series X slim

Xbox series X Pro

PS5

PS5 pro

3rd party devs will look at all those boxes, much like how they look at PC.

So the PS5 is the weakest? Let's build it around that and just boost some graphical settings on the other consoles if we've got time for it; otherwise we just lock it either way.

With PC they look at what GPUs people are using right now. Let's focus on that, and people can push settings higher if they have better-performing hardware.

Those whole 2 CPU architectures (of which consoles basically use 1) and 2 GPU architectures are some mighty hard shit to code for, mate. Especially when every single engine uses them as the base for everything, even console games.

U didn't think this one through, did you?

PC has low-level APIs, mate; this is not the year 2000 anymore. U should google Vulkan, Game Mode and DX12. They are all designed to mirror the console space as much as possible performance-wise, and frankly that's exactly what they do.

Want to see the amazing 750 Ti run AC Odyssey? Here u go. The PS4 version runs at 900p-1080p at anywhere from 20-30 fps.

It's also almost like u can overclock the hardware while u are at it, or just upgrade and get even better performance.

Yet a 2080 Ti, which has 4x the compute performance of the Xbox One X, can't even remotely run Odyssey at 4K and 60 fps at ultra settings without ray tracing. How do visual settings work? U want them to run the game at low settings straight out of the gate? Or u want crisp, high-quality detail with less pop-in? U know, where a CPU and GPU actually have to do some work.

I guess it's all just magical to you.

Here's a really simplistic game, nowhere near the detail of open world games, pushing ray tracing at 4K on a 2080 Ti, which is far faster at RT than RDNA2 will ever be at 4K.

However, it runs anywhere between 15 and 27 fps, with huge lag spikes as the memory can't keep up.

Yet Xbox Series X, with weaker CUs, less bandwidth and less GPU performance, will have no problem rendering complex environments with RT without effort, because magic. Even shitty fake ray tracing modes, like the one from that Marty guy that fakes half of it, can't keep a stable 30 fps at 4K on even an RTX Titan. This is why RT cores are going to balloon in the next series of GPUs.

And what do you base the claim that Odyssey runs like crap on PC on? A 750 Ti with a potato 2-core CPU runs it at a stable 30 fps at 1080p. Yeah, seems badly optimized to me, oh wait.

U should probably stop reading drivel from idiots and basing your facts around that. It's a very, very well optimized game, even if at ultra settings it kills all hardware on the market. Because ultra settings on PC are mostly built for future hardware, to let the game age better. And if a game doesn't offer them, people will mod them in.

It's clear, however, that u have absolutely no clue how fucking hard it is to render RT and how demanding 4K is for the GPUs in those boxes.

This is why PC gamers started to laugh at the mention of 4K and RT, as they know how unrealistic it is. There is no hardware in Xbox Series X that could push those frames unless they sacrifice massively on the quality of the game, and PS5 sure as hell will capitalize on it with high fps / high resolution and better draw distances, while Microsoft sits there with a bit of "better lighting". Good luck selling that.

U wut mate.

It's practically a PC. It uses all the parts and it uses the Windows core, and that's exactly what they want, because of BC for the future but also for now with their own boxes, and also to keep it more in sync with the PC market, which they are aiming for heavily at this point.

The Xbox Series X would be a high-end box if it released right now and focused on 1080p, but because it focuses on 4K, and that's the issue here, it's simply weak sauce.

Let me explain.

I can buy a 2080 Ti right now and slam 8K on it and no game will run for shit. Is the 2080 Ti a weak GPU? No, not really; it rocks everything at 1080p without issues. But say it doesn't do 1080p, it does 8K: is it a weak 8K card? Yeah, it's complete dog shit. And there you go with Xbox Series X. Welcome to the 4K club, where performance disappears into thin air. And now u understand why PC gamers said the GPU is all that matters. Luckily MS understood this and actually slammed as fast a GPU in it as they could, even though they would have been better off going with Nvidia and slamming a 3080 Ti in that box, or a full-blown RDNA2 90c GPU. PS5, however, yeah, good luck with that.
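The resolution argument above comes down to pixel counts. As a rough sketch (the `RESOLUTIONS` table and `pixel_ratio` helper are illustrative names, and real GPU cost does not scale exactly linearly with pixels, so treat the ratio only as a first-order proxy), this compares each target against 1080p:

```python
# Standard 16:9 resolutions as (width, height) in pixels.
RESOLUTIONS = {
    "1080p": (1920, 1080),
    "1440p": (2560, 1440),
    "4K": (3840, 2160),
    "8K": (7680, 4320),
}


def pixel_ratio(target: str, base: str = "1080p") -> float:
    """How many times more pixels `target` has than `base`."""
    tw, th = RESOLUTIONS[target]
    bw, bh = RESOLUTIONS[base]
    return (tw * th) / (bw * bh)


if __name__ == "__main__":
    for name in RESOLUTIONS:
        print(f"{name}: {pixel_ratio(name):.2f}x the pixels of 1080p")
```

4K has four times the pixels of 1080p and 8K has sixteen times, which is why the same GPU can look strong at one resolution and weak at another.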

This is why PC gamers laughed at the 2080 Ti when it came out: RT performance was a complete joke. People would even call it a shit card, even though it's the fastest on the market besides the Titan etc.

The only one with an ego is you. 4K, RT and 60 fps is simply not doable with the hardware in those boxes unless u drop the complexity massively, and that's not what a game like AC Odyssey represents. Maybe fighters, and that's about it.

About your high end rigs.

Let me tell u something: those consoles aren't out right now, and when they are, they will be dwarfed by PC hardware. Want a few examples?

On PC, a 1080 Ti sits at 3.5k CUDA cores at 2 GHz and pushes about 2080 performance (Xbox Series X performance, if we can believe DF on that front).

The next Nvidia GPU sits at 8k CUDA cores.

Xbox has ~52 CUs, the 2080 Ti has 78 RT cores, and the next rumored GPU has 256 RT cores (2070 Super = 52 RT cores).

AMD's next flagship GPU is rumored to have 120 CUs.

Nvidia RT is heavily proven to work; on the AMD side, the demo they showcased at the Xbox reveal performed like laughable shit, and the same goes for RTX Minecraft.

Not to forget, PC is riddled with memory that's far faster, and more of it, than those boxes have. Actual memory. It will also have DLSS 3.0; AMD's versions of this are all heavily unproven.

There is no comparison. And then people on PC will not move to 4K, which will give them another level of performance boost. If Cyberpunk runs with RT on at barely 60 fps at 4K on a 3080 Ti, consoles will probably struggle to run the same quality at 1080p 30 fps with RT on.

AC games are already built from the ground up for multicore CPUs. Maybe u didn't notice, but PS4 and Xbox all use more cores; Odyssey uses 6 cores and 12 threads on my 9900K all day long, and even goes as far as addressing another core at times. It's a perfect fit for next gen consoles, as they will be sitting with weaker CPUs and 7 cores to address. U act like PC doesn't exist and live in a vacuum, yet PC is Ubisoft's biggest market.

And complexity increases, and RIP CPU performance. How does stuff work.

They could have 300x faster storage devices, doesn't change shit. Guess what storage devices are used for: fucking storage, holy shit.

Anyway, u tried tho.

Although you were unnecessarily rude, thanks for the long, well thought out reply. I’m going to read it properly later when I have more time.

DeepEnigma

Gold Member


Geki-D

Banned

You realize that it's NOT how it works, right? Lol

That's how I understand it, and it seems the proof of that is out there.

Stories of video game studios operating under “crunch time” — a prolonged, semipermanent period of working late nights and weekends to get games done before a deadline — are as common as they are depressing. Rockstar, the developer behind the Grand Theft Auto franchise, had developers working 60- to 80-hour weeks to meet Red Dead Redemption 2’s deadline. Epic, the company behind online megahit Fortnite, acknowledged a few cases of employees working up to 100 hours a week in what some workers consider a constant crunch lifestyle.

Much of that excessive work is spent on very minor but important details. As Tony Tamasi, senior vice president of content and technology at Nvidia, explained to OneZero, the process of getting shadows in just the right spot — called shadow mapping — can be tedious and time consuming. “It’s a pretty good amount of time developers and artists have to spend custom-tweaking things to get shadow maps to look right… And those are the kinds of things that ray-traced shadows all just magically solve for you.”

Cody Darr is an independent developer and creator of Sonic Ether’s Unbelievable Shaders, a mod that turns Minecraft’s basic block design into a detailed thing of beauty. Early versions of this mod used the old rasterization technique, but now Darr has switched to a ray-traced model. “I used to spend countless hours tweaking various faked lighting techniques to achieve the look I wanted,” Darr explains. “With ray tracing, those endless hours of tweaking to find the right balance for fake lighting effects have vanished. Everything just looks right!”

For now, most players don’t have ray tracing–capable hardware, so game studios will still need to rely on the old methods. Ironically, this will add more work to support both rasterization and ray tracing, but not much. “Yeah, it’s not free,” Tamasi says. “It’s some amount of incremental work. There’s no doubt about that. But the nice thing about ray-traced shadows is that they’re… just kind of algorithmically right.”

Further into the future, when ray tracing becomes common place, developers can start to drop older techniques in favor of these easier, faster methods. Whether that leads to less crunch time for developers is another question. “Sadly, I think it’s more of a cultural issue,” Darr says. “Technology powering game development has only gotten better, yet crunch time has only gotten worse.”


Game developers have used all kinds of wild tricks over the years to simulate stuff like this. In games with mirrors, developers have created entire rooms behind the mirrors, in which a double is rendered and moves with the same inputs that our character does, just flipped, so that it looks like a reflection, but really it’s a mindless double, like some horrifying Twilight Zone horror movie nightmare.

With ray tracing, a lot of this goes away. You tell that table in the room how much light to absorb and reflect. You make the glass a reflective surface. When you move up to the window, you might be able to see your character if there’s enough backlighting. Getting up close to that vase will show you a funhouse mirror version of your face. The room lights up with one source of light, and no one had to draw those reflections or that vase’s bent shadow on the table. Instead, they’re just there, and they just work, because ray tracing takes care of all that stuff. That’s why it’s both so difficult for consumer-affordable hardware to do and so desirable for game developers. It saves a lot of time spent manually simulating things and lets the creators just create.

On the game developer side, that’s going to save tons of time. That could mean fewer game delays, because developers aren’t spending time fixing cube maps to make reflections seem real enough, tweaking lights to get a scene to light up correctly, or anything like that. There’s less to debug because instead of 10 different things simulating the effects of one light source, there’s just one light source, and the other elements around it will get in the way of the light or bend it just by being there. It could also mean less time spent on stuff like that and more time spent on more interesting features. Instead of trying to make lighting look realistic, the lighting will just look realistic. Instead, the artist can focus on making more interesting objects in the space and more realistic textures. The layout person can shift their worry off of making sure the room lights up as expected. The particle effects artists don’t have to worry about creating the effect and its reflections.

So we won’t just get flashier games out of this, we’ll get more interesting games and maybe even less buggy games. It’s a lot easier to make one magic trick work than it is to get 10 working in concert.


Portugeezer

Member

Aaron has some explaining to do.

DoctaThompson

Banned

That's how I understand it, and it seems the proof of that is out there.


Of course this could also be seen as a reason to point out that not making next gen exclusive games by having PC ports is actually going to cause devs to have to do extra work because they can't rely on ray tracing being a default.

Can you point me in the direction of a confirmation that next gen consoles will be using full raytracing on every game, and how well it performs to even come to that conclusion? Unless this is 100% true, you can bet next gen consoles will hold PC back, yet again, like every generation.

U should read the PS5 thread, where they talk all day long about I/O and SSD and honestly think they'll get 4K, RT and 60 fps in every game even while a fucking 2080 Ti can't get 60 fps with RT at even 1080p, because the PS5 is faster than any supercomputer at this point haha.

It's funny as fuck.

These guys are delusional as shit.


I can’t wait to see the face-offs from DF between the PS5, Series X and 2080 Ti, and if they show the consoles outperforming the 2080 Ti in some games, how will the PC master race cope?


Aaron has some explaining to do.

Not really, he has been talking a huge amount of shit since 2007/8. Biggest clown at MS. I feel sorry for anyone who takes him seriously.


Jayjayhd34

Member

Can you point me in the direction of a confirmation that next gen consoles will be using full raytracing on every game, and how well it performs to even come to that conclusion? Unless this is 100% true, you can bet next gen consoles will hold PC back, yet again, like every generation.

It's IMPOSSIBLE at 4k

Drunkensamurai

Member

Terrible few weeks for Ass Creed, but I doubt the general public will care.


Geki-D

Banned

you can bet next gen consoles will hold PC back, yet again, like every generation.

Ah, I see now what triggers you. I suggested PC might be inferior. A PC Übermensch, I see.

Right now we know that both next gen consoles will do ray tracing right off the bat. We know that ray tracing can reduce workload. We know that the vast majority of PCs can't do ray tracing. We know that PC games will have to be made for at least the next few years without taking into account these ray tracing shortcuts.

I think it's safe to assume that the systems built with ray tracing as a default have one extra tool they can count on than the system that can't rely on that tool being available. Even if that tool isn't "full" in every game.


ultrazilla

Member

Haven't played the series in awhile, but isn't 30 fps standard for the console versions?

Yes. However, with the bill of goods we've been sold with the Xbox Series X, we've been "programmed" to think all of its games will be 4k/60fps at a minimum.

So this is why people are pissed.

It probably *was* running at 60fps but when Ubisoft added raytracing, it probably halved the framerate.

Batiman

Banned

Aaron has some explaining to do.

He can’t tell 3rd parties how to make their game. The game could run at 60 but the devs chose to use that power elsewhere. It’s bullshit, I agree. People gotta direct their frustration at Ubisoft.

Unless he said the series x mandated 60fps. Then I’m not sure.

I Love Rock 'n' Roll

Member

Now see, that's a reasonable response. I would also like to see an option like that for those that prefer a higher frame rate.

They just don't get that PS4 is the target hardware and next gen upscales FROM PS4, not the opposite. They don't get that you need 8 TFLOPS with RDNA1 to upscale a PS4 game with a Jaguar CPU to 4K. They don't get that SX is mostly double that requirement (12 TFLOPS ANNNND RDNA2). They don't get that Zen 2 is about 4x more powerful than a last-gen Jaguar. They don't get that it has extra-fast RAM + SSD. We have watched DF.

But we do get what Phil said about next gen and 60 FPS, and it's not real... again. Do we know whether we want FPS over ray tracing?

Now, I'm sticking with my One X and Pro.
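For context on where a headline figure like "12 TFLOPS" comes from, here is a minimal sketch of the usual peak-throughput arithmetic for AMD GPUs. Everything specific in it is an assumption not stated in this thread: the 64 shaders per compute unit and 2 floating-point operations per clock (one fused multiply-add) convention, and the publicly listed specs of 52 CUs at 1.825 GHz for Series X and 40 CUs at 1.172 GHz for One X. The function name `peak_tflops` is hypothetical, and peak TFLOPS says nothing about real-game frame rates.

```python
def peak_tflops(compute_units: int, clock_ghz: float,
                shaders_per_cu: int = 64, flops_per_shader_per_clock: int = 2) -> float:
    """Theoretical peak FP32 throughput in TFLOPS (an upper bound, not measured performance)."""
    gflops = compute_units * shaders_per_cu * flops_per_shader_per_clock * clock_ghz
    return gflops / 1000.0


if __name__ == "__main__":
    # Assumed public specs, not taken from this thread.
    print("Series X:", round(peak_tflops(52, 1.825), 2), "TFLOPS")
    print("One X:   ", round(peak_tflops(40, 1.172), 2), "TFLOPS")
```

On paper that is roughly a doubling from One X to Series X, which lines up with the "double" claim in the post, but it is only a theoretical ceiling.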

DunDunDunpachi

Patient MembeR

Yes. However, with the bill of goods we've been sold with the Xbox Series X, we've been "programmed" to think all of its games will be 4k/60fps at a minimum.

So this is why people are pissed.

It probably *was* running at 60fps but when Ubisoft added raytracing, it probably halved the framerate.

Maybe when the game launches, IGN or some other corpo shill outlet will give us a next-gen version of "The Human Eye can't even see above 30fps at 4K resolutions..."

MMaRsu

Banned

I really couldn't care less about this game being 30 fps. I think it's 100% fine, and I'd rather take prettier graphics than 60 fps any day of the week

Probably not a common opinion on this forum, but whatever

So you value graphics over gameplay

And this is why many devs will target 30fps

Gamers have no standards

Jayjayhd34

Member

So you value graphics over gameplay

And this is why many devs will target 30fps

Gamers have no standards

So do you class yourself as a gamer?