State of Next-Gen Graphics So Far...

- Thread starter VFXVeteran

- Opinion Analysis Review

Tqaulity

Member

Ok, you can look at it this way, and I can see why you would be disappointed. I would just point out that we have yet to see a single game built from the ground up for the next-gen console specs. Even Ratchet was a game that started development long before the PS5 specs were known. Same with games like Returnal, Horizon FW, Demon's Souls etc. Nearly every game available now is built on an engine designed for PS4/Xbox One-level hardware (incl. UE4) and is not very well optimized for the new-gen consoles. Control, for example, was a quick port that did nothing to take advantage of the SSD. COVID is affecting QA and optimization passes for many devs, and a number of games (e.g. Deathloop, Guardians of the Galaxy and many more) did not receive the proper care to optimize for the new consoles. These machines can do A TON of things we haven't seen in a game before when you consider the system-wide advancements in CPU, GPU and I/O. It sucks that it is taking so long, but we will see some jaw-dropping stuff when it's all said and done, well beyond what we are seeing now.

@Feel Like I'm On 42 I understand how you feel. I am also a bit underwhelmed by what PS5 and XSX are bringing to the table, which isn't much at the moment. The new I/O subsystem + fast SSD is pretty impressive, but so far only some sections of Demon's Souls and parts of Ratchet & Clank have looked truly next-gen. Everything else is just higher-res PS4 games, and comparisons between PS5 and PS4 versions are pretty boring: it's just some sliders turned up, hardly anything specific to the hardware. Even then, the horsepower is a bit puzzling; even the latest Guardians of the Galaxy game has to drop to 1080p to run at 60 FPS.

I feel like I just swapped graphics cards rather than moved to a new generation of consoles. I hope they bring out a PS5 Pro, because this doesn't feel like enough performance to even tell PS4 and PS5 apart.

That said, I still think these consoles are actually overachieving when you really break down the level of performance they deliver and consider that most devs haven't even begun to take advantage of them. The focus this gen is more on consistent performance (which is the right way to go), which is why DRS (dynamic resolution scaling) and reconstruction are used so often. It would be a problem if they made these "compromises" and still delivered subpar and uneven performance, but that is definitely NOT the case. It's all perspective... you can scoff at some games for their lower internal resolutions, but it's really the developer being wise and optimizing the game for consistent performance. Frankly, many PC titles could do better by using more DRS and reconstruction to provide consistent perf as well (many do, which is why DLSS/FSR is becoming more and more common).
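A minimal sketch of the DRS idea in Python: a controller that lowers or raises the internal resolution each frame so GPU time stays inside a 60fps budget, with reconstruction then outputting 4K. The function names, the 0.5-1.0 per-axis scale range, the 90% headroom and the assumption that GPU cost scales with pixel count are all illustrative assumptions, not how any particular console game does it.

```python
from typing import Tuple

# Hypothetical DRS controller. Assumptions: 60fps target, per-axis render
# scale clamped to 0.5-1.0, GPU frame cost proportional to pixel count.
TARGET_MS = 1000.0 / 60.0          # ~16.67 ms frame budget at 60fps
MIN_SCALE, MAX_SCALE = 0.5, 1.0    # 0.5 per axis = 1080p internal for 4K output


def next_render_scale(scale: float, gpu_ms: float, headroom: float = 0.9) -> float:
    """Per-axis render scale for the next frame, given the last frame's GPU time."""
    budget_ms = TARGET_MS * headroom  # aim a little under the budget
    # Pixel count goes with scale**2, so correct the scale by the square
    # root of the budget/time ratio, then clamp to the allowed range.
    proposed = scale * (budget_ms / gpu_ms) ** 0.5
    return max(MIN_SCALE, min(MAX_SCALE, proposed))


def internal_resolution(scale: float, out_w: int = 3840, out_h: int = 2160) -> Tuple[int, int]:
    """Internal resolution that reconstruction would then upscale to out_w x out_h."""
    return round(out_w * scale), round(out_h * scale)


# Usage: a heavy 20 ms frame at full 4K lowers the next frame's resolution;
# a light 10 ms frame afterwards lets it climb back to the 1.0 cap.
scale = next_render_scale(1.0, 20.0)
print(scale, internal_resolution(scale))
print(next_render_scale(scale, 10.0))
```

The square-root correction is the design choice worth noting: halving the per-axis scale cuts the pixel count to a quarter, so the controller has to move the scale more gently than the frame-time ratio itself.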

Given all of that, let me reiterate some numbers:

- 99% of (native) games released for PS5 and Series consoles have 60fps (or higher) support, i.e. 60fps is now the new standard! There are only a handful of outliers, such as The Medium.

- Of the titles that support 60fps on these consoles, the vast majority are a virtually locked 60fps, hitting their 60fps target in the 99th percentile. This level of consistency was a foreign dream on previous-gen consoles like PS4/XB1 and PS3/Xbox 360, and in many cases is something a PC can't match without much higher specs (to get enough overhead for a minimum of 60fps).

- Both consoles have ~20 ray-tracing-enabled games within their first year (compared to ~5 RTX-enabled games in RTX's first year).

- There are over 20 PS5 games and over 70 Xbox Series games that support 120fps in their first year (the Xbox count includes FPS Boost legacy titles).

- Considering the heavy tax of RT and the fact that AMD's RDNA2 isn't great at RT at its core, we have already seen several titles running with meaningful RT at a locked 60fps on consoles. These include Metro Exodus, Resident Evil Village, Doom Eternal, Spider-Man, Ratchet & Clank and others.
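One way to make the "virtually locked 60fps in the 99th percentile" claim above concrete: given a capture of per-frame times, check that the 99th-percentile frame time fits in the ~16.67 ms budget of 60fps. This Python sketch uses a nearest-rank percentile and a 0.5 ms tolerance for capture jitter; both are assumptions for illustration, not the methodology of any particular analysis outlet.

```python
import math
from typing import List

BUDGET_60_MS = 1000.0 / 60.0  # ~16.67 ms per frame at 60fps


def percentile(values: List[float], pct: float) -> float:
    """Nearest-rank percentile (pct in 0-100) of a non-empty list."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100.0 * len(ordered)))
    return ordered[rank - 1]


def is_virtually_locked(frame_times_ms: List[float], tolerance_ms: float = 0.5) -> bool:
    """True if the 99th-percentile frame time fits the 60fps budget plus a tolerance."""
    return percentile(frame_times_ms, 99) <= BUDGET_60_MS + tolerance_ms


# Usage: 0.5% of frames doubled (33.4 ms) still counts as locked; 5% does not.
steady = [16.7] * 995 + [33.4] * 5
choppy = [16.7] * 950 + [33.4] * 50
print(is_virtually_locked(steady), is_virtually_locked(choppy))
```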

tommib

Member

We have to disagree on this one. I think the very singular style and aesthetics of Returnal will survive better than Metro's ray tracing, which doesn't leave any kind of impression on me - I need to rationalise what I'm seeing in order to "get it". The pitch-black art direction of Returnal is quite something in my view, and we've seen that artistic choices tend to survive better over time than tech. I think Returnal will survive in the same way Quake did - they have a lot in common.

While the effects and physics are clearly a step up in Returnal, environmental geometric density, lighting and player character model quality are either no better or only marginally improved over last gen.

I think the ray-traced global illumination in Metro Exodus is more "next gen" because it affects everything in the game world.

Returnal will look significantly less visually sophisticated in a few years imo.

After all, it is an early UE4-based game made by a small team.

We have to remember that when games start to utilise the SSD/I/O setups, VRS (variable rate shading) and mesh/primitive shaders, they will get a significant visual boost.

Raw power is only one part of the equation. New, more efficient hardware features are a big part of what makes games look "next gen".

It's why new games look better than remasters. The PS4 is maxed out running a slightly enhanced BioShock Infinite at 1080p 60fps, but games like DOOM look vastly better because of the new hardware features of the PS4.

Tqaulity

Member

Native 4K 60fps would be idiotic, but most AAA games don't target that. They use tools like DRS and image reconstruction tech to output 4K without taxing the GPU with the full workload. I keep seeing people complain about devs targeting 4K, and they don't seem to realize that the vast majority are not. Dynamic 4K is just smart because you keep the load on the system low while achieving an image that is nearly indistinguishable from native 4K: win-win.

4k 60 fps focus is idiotic.

No DLSS

Bad at RT

PS4 focused content

That's why.

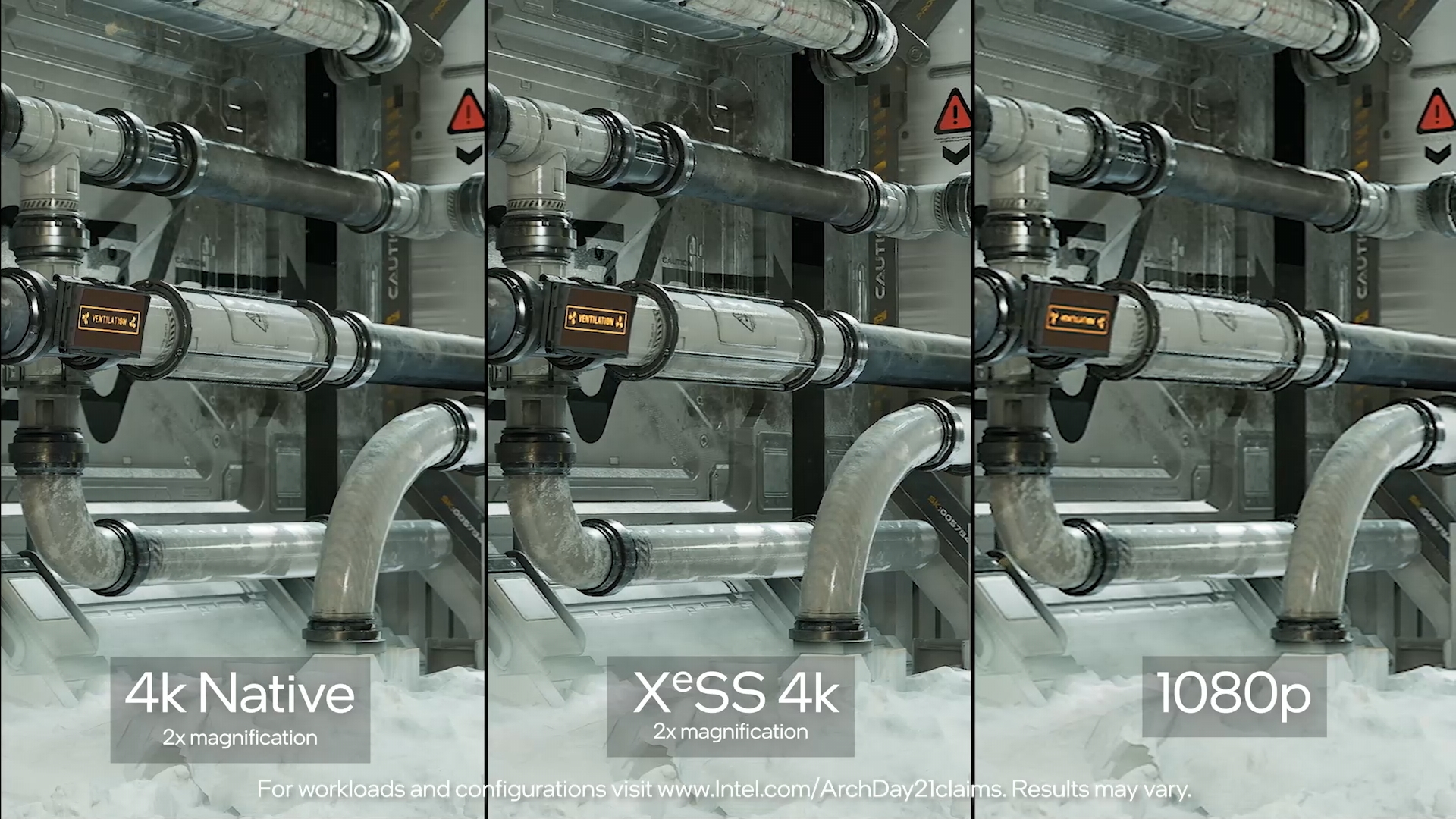

NO DLSS - again, nearly every game released with a 4K target uses some form of image reconstruction to get there. DLSS is just one reconstruction method, and while it isn't available on console, many devs have their own custom solution or just use Epic's solution if they're on UE4. FSR is also starting to make the rounds on console. Insomniac's reconstruction in Spider-Man and Ratchet rivals anything out there IMO, and the IQ in those games is stellar regardless of what the internal resolution really is.

I've said many times that the sheer obsession with pixel counts and frame counts is just sickening to me and completely misses the point of what playing a game is about. Ultimately, the quality of the experience, in terms of what you see and feel when playing, is what should matter. The way I think about it: how much of the complaining and discussion would go away if devs or media like Digital Foundry never specified a resolution for their games? Ratchet & Clank still looks stellar on a 4K screen in its performance mode. Without knowing the pixel count, how many people would have just enjoyed the game instead of refusing to play in that mode because sub-1440p just sounds low to them? Returnal is another classic example. Before the analysis came out and said it used a 1080p internal resolution (i.e. what DLSS Performance mode uses for 4K output, BTW), many people were saying, based on their eyes while playing, that it looked 4K to them. In fact, most were shocked to hear that it was only a 1080p input. That's everything wrong with gamers today. Who the f**k cares what number is used internally? With sophisticated upscaling, your eyes see a great-looking image, so what's the problem? Should the number of pixels really matter if you can't see it on screen? So be it... but while you're constantly pausing your game to run up to the screen and zoom in to count pixels, I'll just keep playing and enjoying the game.


Sosokrates

Report me if I continue to console war

We have to disagree on this one. I think the very singular style and aesthetics of Returnal will survive better than Metro's ray tracing, which doesn't leave any kind of impression on me - I need to rationalise what I'm seeing in order to "get it". The pitch-black art direction of Returnal is quite something in my view, and we've seen that artistic choices tend to survive better over time than tech. I think Returnal will survive in the same way Quake did - they have a lot in common.

You may be right, kind of in the same way that a game like Geometry Wars ages better than more complex 360 games of the time. But that is a different topic, more of an art preference; I'm sure there are people who prefer the look of Ori over Returnal or Metro. What I'm talking about is overall technical sophistication, and lighting is the most important factor in modern games.

And while I think real-time dynamic global illumination is more sophisticated and impactful overall than the effects and physics work of Returnal, I also think current-gen games in 2024 will look a lot better than both of these games.

Returnal and Metro are not worlds apart, but if I also lump in Gears 5 (running on a Series X) and TLOU2 (running on a PS5), then it becomes a case of those games' higher budgets enabling more custom, curated work. We don't have tech that can replace artists spending hours working on an environment, adding detail and identity.

It's why, even with better tech, some last-gen games may still look better in some ways. Take RDR2, for example: you can find some log cabin in the middle of nowhere that is very detailed, with pots and pans, a burning fire, carpet, a messy bed, shoes on the floor etc., while some current-gen exclusive has real-time GI, higher polycounts and better textures, but its log cabin is bare and very stock-looking.

Kenpachii

Member

Native 4K 60fps would be idiotic, but most AAA games don't target that. They use tools like DRS and image reconstruction tech to output 4K without taxing the GPU with the full workload. I keep seeing people complain about devs targeting 4K, and they don't seem to realize that the vast majority are not. Dynamic 4K is just smart because you keep the load on the system low while achieving an image that is nearly indistinguishable from native 4K: win-win.

NO DLSS - again, nearly every game released with a 4K target uses some form of image reconstruction to get there. DLSS is just one reconstruction method, and while it isn't available on console, many devs have their own custom solution or just use Epic's solution if they're on UE4. FSR is also starting to make the rounds on console. Insomniac's reconstruction in Spider-Man and Ratchet rivals anything out there IMO, and the IQ in those games is stellar regardless of what the internal resolution really is.

I've said many times that the sheer obsession with pixel counts and frame counts is just sickening to me and completely misses the point of what playing a game is about. Ultimately, the quality of the experience, in terms of what you see and feel when playing, is what should matter. The way I think about it: how much of the complaining and discussion would go away if devs or media like Digital Foundry never specified a resolution for their games? Ratchet & Clank still looks stellar on a 4K screen in its performance mode. Without knowing the pixel count, how many people would have just enjoyed the game instead of refusing to play in that mode because sub-1440p just sounds low to them? Returnal is another classic example. Before the analysis came out and said it used a 1080p internal resolution (i.e. what DLSS Performance mode uses for 4K output, BTW), many people were saying, based on their eyes while playing, that it looked 4K to them. In fact, most were shocked to hear that it was only a 1080p input. That's everything wrong with gamers today. Who the f**k cares what number is used internally? With sophisticated upscaling, your eyes see a great-looking image, so what's the problem? Should the number of pixels really matter if you can't see it on screen? So be it... but while you're constantly pausing your game to run up to the screen and zoom in to count pixels, I'll just keep playing and enjoying the game.

They do focus on 4K, and that's what I'm saying. I'm not talking about native only here.

Go look at Guardians of the Galaxy, for example (not that it's a meaningful comparison, for a lot of reasons, but it's just one example; you see this everywhere):

"Guardians of the Galaxy PS5 in Quality Mode uses a dynamic resolution with the highest resolution found being 3840x2160 and the lowest resolution found being 2880x1620."

4K focus: you see it in every game, prioritized over additional visual settings or framerate increases. Why is that? Because of the 4K focus. CB (checkerboard rendering) is great, but it only works when the input resolution is already high enough or you sit far enough from the screen, because it lowers the base resolution, and the lower it gets, the more it shows.
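For readers unfamiliar with CB: the core idea is that each frame shades only half of the output pixels in a checkerboard pattern, alternating which half every frame, and the missing half is reconstructed (typically from the previous frame). The toy Python sketch below works per pixel and only illustrates that pattern and the halved shading cost; production implementations add things like motion vectors and different sample layouts, and the function names here are hypothetical.

```python
# Toy illustration of the checkerboard (CB) sampling pattern: on each frame
# only pixels where (x + y + frame) is even are freshly shaded; the rest
# would be reconstructed (e.g. from the previous frame). Hypothetical and
# per-pixel; real CB implementations are considerably more involved.


def shaded_this_frame(x: int, y: int, frame: int) -> bool:
    """True if pixel (x, y) is freshly shaded on this frame."""
    return (x + y + frame) % 2 == 0


def shaded_fraction(width: int, height: int, frame: int) -> float:
    """Fraction of output pixels shaded on a given frame."""
    shaded = sum(
        shaded_this_frame(x, y, frame) for y in range(height) for x in range(width)
    )
    return shaded / (width * height)


def checkerboard_row(y: int, width: int, frame: int) -> str:
    """ASCII view of one row: '#' = shaded this frame, '.' = reconstructed."""
    return "".join("#" if shaded_this_frame(x, y, frame) else "." for x in range(width))


# Usage: two consecutive frames of a tiny 8x2 image, then the shaded share.
for frame in (0, 1):
    print(f"frame {frame}:", checkerboard_row(0, 8, frame), checkerboard_row(1, 8, frame))
print(shaded_fraction(8, 8, 0))  # 0.5
```

Every pixel is shaded on exactly one of any two consecutive frames, so each frame does half the shading work and leans on the previous frame for the other half.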

If consoles had had access to DLSS, and built further on top of it, you could expect a 720p internal resolution to look like 1440p, 1080p to look like 4K and 540p to look like 1080p, especially on a TV.
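The arithmetic behind those three pairs, as a short Python sketch using the standard 16:9 frame sizes for each named resolution. The 1080p to 4K pair is the same 50%-per-axis input the thread attributes to DLSS Performance mode.

```python
from typing import Tuple

# Standard 16:9 frame sizes for the resolutions named in the post.
RESOLUTIONS = {
    "540p": (960, 540),
    "720p": (1280, 720),
    "1080p": (1920, 1080),
    "1440p": (2560, 1440),
    "4K": (3840, 2160),
}


def upscale_factors(internal: str, output: str) -> Tuple[float, float]:
    """Return (per-axis upscale factor, share of output pixels actually rendered)."""
    iw, ih = RESOLUTIONS[internal]
    ow, oh = RESOLUTIONS[output]
    return ow / iw, (iw * ih) / (ow * oh)


for internal, output in [("720p", "1440p"), ("1080p", "4K"), ("540p", "1080p")]:
    axis, share = upscale_factors(internal, output)
    print(f"{internal} -> {output}: {axis:.1f}x per axis, {share:.0%} of output pixels rendered")
```

All three pairs print 2.0x per axis and 25%, i.e. the upscaler has to reconstruct three out of every four output pixels.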

That's why even Nintendo is busy with DLSS, and Intel's next GPU will most likely have DLSS 3.0 straight out of the gate, as the NVIDIA DLSS engineers are working on it.

People just saying "DLSS is just another DSR" are missing the point here. CB works if the resolution detail is high enough, but you still sacrifice detail; DLSS always works, and works better, which is why it's needed in today's games. It's also why nobody on PC would buy AMD GPUs anymore if it weren't for the shortages, and even then they are practically irrelevant by any metric. Intel could knock AMD out of the market if AMD doesn't improve drastically (which they seem to be doing with their next GPUs).

Now imagine Guardians of the Galaxy at 1080p 60fps looking like full native 4K at 60fps, with a performance mode at 720p looking like 1440p at 120fps (if the CPU allows it). Or, better, just slam RT on top of it and hold that 60fps. Or go completely nuts with next-gen games by actually pushing visuals a generation forward.

This is why I listed no DLSS as a problem, especially in an age where RT absolutely kills framerate; as we move toward it, it will affect consoles even more negatively. This is why they should never have gone with AMD: a simple Ampere core at whatever clocks would have pushed them into a stable solution going forward. Instead they got the absolute worst deal in the GPU market, which will cripple them severely for years to come.

About the 60fps remark: even though I've considered 30fps unplayable for a decade or more now, I don't think consoles should focus on 60fps to begin with; it's too much stress for fixed hardware and it greatly limits visual output. Imagine how games would look at a 720p internal resolution and 30fps on a PS5 with tensor cores, versus what we get now at 1620p and 60fps. That's a huge, huge leap in performance.
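The arithmetic behind that comparison, as a rough Python sketch that treats GPU load as proportional to pixels shaded per second. That is a big simplification (RT, geometry and CPU costs don't scale this way). 2880x1620 is the lowest DRS bound quoted above for Guardians of the Galaxy; 1280x720 is the standard 720p frame.

```python
def pixels_per_second(width: int, height: int, fps: int) -> int:
    """Pixels shaded per second at a given internal resolution and framerate."""
    return width * height * fps


low = pixels_per_second(1280, 720, 30)       # 720p internal at 30fps
current = pixels_per_second(2880, 1620, 60)  # 1620p (lowest quoted DRS bound) at 60fps
print(f"{current:,} vs {low:,} pixels/s, ratio {current / low:.1f}x")
```

By this crude measure, 1620p at 60fps shades a bit over 10 times as many pixels per second as 720p at 30fps, which is the headroom the post is pointing at.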

I think people are in for a rude awakening when next-generation games come out and performance absolutely tanks on these consoles, because they are simply poorly designed for the future (another thing I mentioned on day one). The focus should have been on the GPU, and everything else should have been secondary. Don't get me wrong, the SSD is needed, but the absolutely insane focus on SSD tech is a mind-boggling waste of time and budget in my view.

People will always count resolutions, and the same goes for framerate and other things. The problem isn't the output resolution: TV tech moves forward and they want to support it. It's the way they get there, the focus, where they are failing hard. Focusing on 4K is simply useless, and anybody, including yourself, knows this. I had 1.5k to spend on a new PC screen and could have bought anything I wanted, since I have a 3080 that can drive pretty much any resolution, but I ended up going for 3440x1440. Why? Anything above 1440p absolutely annihilates GPU performance for far too small gains. It's the same reason ultra settings on PC are a crapshoot in some games.
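A rough Python sketch of why resolutions above 1440p get expensive: per-frame pixel counts of the display resolutions mentioned in this thread, relative to 2560x1440. It assumes GPU load scales with pixel count, which is only a first-order approximation.

```python
# Per-frame pixel cost relative to a baseline, assuming load ~ pixel count.
DISPLAYS = {
    "1920x1080": (1920, 1080),
    "2560x1440": (2560, 1440),
    "3440x1440": (3440, 1440),
    "3840x2160": (3840, 2160),
}


def relative_cost(name: str, baseline: str = "2560x1440") -> float:
    """Pixels per frame of `name` divided by pixels per frame of `baseline`."""
    w, h = DISPLAYS[name]
    bw, bh = DISPLAYS[baseline]
    return (w * h) / (bw * bh)


for name in DISPLAYS:
    print(f"{name}: {relative_cost(name):.2f}x the pixels of 2560x1440")
```

Native 4K works out to 2.25x the pixels of 1440p, while the 3440x1440 ultrawide sits at roughly 1.34x.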

And I totally agree with you on the 1080p part. I was a 1080p player with top-end hardware for a long time; the only reason I moved up from 1080p to 1440p was that I wanted a bigger screen. If that wasn't a requirement, I would still be sitting at 1080p, as the PPI is only a tad higher on my 3440x1440 setup.
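PPI (pixels per inch) is the diagonal resolution in pixels divided by the diagonal screen size in inches. The post doesn't give screen sizes, so the 24-inch 1080p and 34-inch 3440x1440 sizes below are hypothetical, common sizes used only to illustrate the calculation.

```python
import math


def ppi(width_px: int, height_px: int, diagonal_in: float) -> float:
    """Pixels per inch: diagonal length in pixels divided by diagonal size in inches."""
    return math.hypot(width_px, height_px) / diagonal_in


# Hypothetical screen sizes; the post does not say which monitors were used.
old = ppi(1920, 1080, 24.0)
new = ppi(3440, 1440, 34.0)
print(f"{old:.0f} PPI vs {new:.0f} PPI")
```

With those assumed sizes this comes out to roughly 92 versus 110 PPI, a modest increase despite the much larger pixel count.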

And yes, most people won't be able to see the differences. I tested an ultrawide 1080p resolution on this screen, and frankly it looks mighty fine. It just shows how overkill all those resolutions are.