That's a fact. Especially when you push higher resolutions, the CPU becomes less and less of a bottleneck and it's the GPU that becomes the limiting factor. That's why CPU benchmarks in games are done at something like 720p.

This is about decompression, which is a whole other type of workload compared to gaming workloads on the CPU.

When you install a game, its data (geometry, textures, audio, etc.) is stored in a compressed format on the storage device (HDD, NVMe SSD). Boot up the game and that data has to be loaded into system memory and VRAM so it can be presented to the GPU (e.g. textures) and the CPU (e.g. audio).

But before that, the data needs to be decompressed and then converted into a GPU format (if it's textures) via drivers so the GPU can render it. This process of moving compressed data from a storage device to memory and then decompressing those assets isn't really an issue for modern CPUs in games built with HDD speeds (50-100 MB/s) in mind.
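To make the "read compressed, then decompress on the CPU" step concrete, here's a minimal sketch in Python. It uses zlib purely as a stand-in codec (real games ship with their own formats), and `load_asset`, `CHUNK` and the fake in-memory "disk" are hypothetical names made up for illustration, not anything from an actual engine:

```python
import io
import zlib

CHUNK = 64 * 1024  # bytes pulled from "storage" per read (hypothetical size)


def load_asset(storage, chunk_size=CHUNK):
    """Stream a compressed asset from storage and inflate it on the CPU."""
    inflater = zlib.decompressobj()
    out = bytearray()
    while True:
        chunk = storage.read(chunk_size)  # I/O: compressed bytes off the drive
        if not chunk:
            break
        out += inflater.decompress(chunk)  # CPU work: this is the costly part
    out += inflater.flush()
    return bytes(out)


# Stand-in for an installed asset: 1 MiB of fake texture data, stored compressed.
raw_texture = bytes(range(256)) * 4096
on_disk = io.BytesIO(zlib.compress(raw_texture))

in_memory = load_asset(on_disk)
assert in_memory == raw_texture  # what ends up in memory is the original data
```

The faster the drive can feed `storage.read`, the more CPU time the `decompress` calls eat per second, which is the whole problem once you leave HDD speeds behind.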

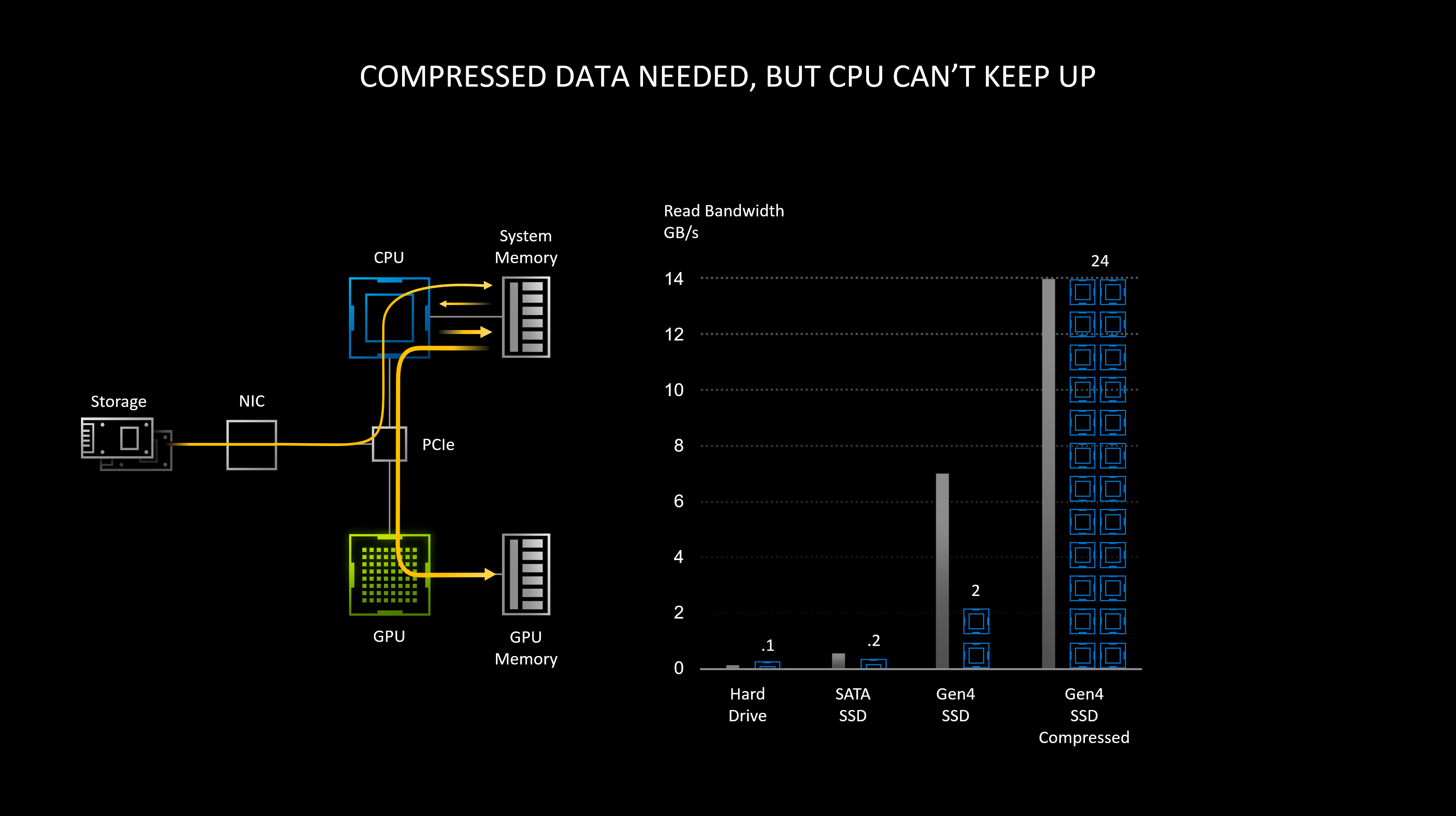

It becomes a problem, one that requires dozens of CPU cores, when the data to transfer and decompress arrives at multiple GB/s, as in games built to take advantage of NVMe Gen3 and Gen4 speeds. This is what the UE5 demo was showcasing, btw. Behind the scenes, the engine is transferring and decompressing high-quality assets from SSD to memory on the fly. PC is genuinely lacking in this department compared to even the Xbox Series consoles, not just PS5. I'm not even making this up. See the slides on this from the Game Stack presentation by a DirectStorage engineer and from NVIDIA:

There are various other factors as well aside from CPU limitation.

There's a dedicated hardware-accelerated decompression unit in Series consoles, "no such solution for PC"

This whole presentation is worth watching as there's a wealth of info in it. He also brings up a lot of the same points (bottlenecks, etc) that Cerny was talking about in his Road to PS5.

"Compressed data needed, but CPU can't keep up"

As a temporary solution for decompression on PC, instead of using the CPU, they seem to want to fall back on the GPU's SMs with RTX IO on Turing and Ampere. This doesn't seem like a good idea to me, as it may have some effect on gaming perf: it's basically taking resources away from game rendering for decompression work. Of course, Jensen won't directly tell us or talk about the perf implications this is going to have, at least not now. And in the near future, we'll likely see something like "next-gen RTX IO" being marketed for next-gen PC GPUs, with a "Decompression Core" or something similar as dedicated hardware (basically what consoles have) offloading the work from the SMs. This is not some wild concept. Remember this chart?

Anyway, asset decompression at Gen4 SSD speeds can take up to 24 cores on a conventional CPU (Threadripper) according to NVIDIA, and 11 and 13 Zen 2 cores according to Sony and MS, respectively. With just 8 cores inside the consoles, you can see they can't rely on the main CPU for asset decompression. This is why the console manufacturers built a custom decompression block into the I/O unit inside the main SoC, so the CPU can focus on what it's meant to do (processing game physics and instructions, preparing draw calls, etc.) without having to worry much about decompression overhead.
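For a rough feel of how the core count scales with drive speed, here's a back-of-the-envelope sketch. The only number taken from above is NVIDIA's 24 cores at Gen4 speeds. The drive rates (7000 MB/s for Gen4, 3500 MB/s for Gen3, 100 MB/s for an HDD) are round numbers I'm assuming, and the linear scaling is a simplification, so treat the output as illustrative rather than measured:

```python
REF_RATE_MB_S = 7000  # assumed Gen4 read rate (not a figure from the slides)
REF_CORES = 24        # NVIDIA's figure for Gen4-speed decompression
CONSOLE_CORES = 8     # CPU cores in the consoles


def cores_needed(rate_mb_s):
    """Cores needed at a given stream rate, assuming linear scaling."""
    # Integer ceiling division avoids floating-point rounding surprises.
    return -(-rate_mb_s * REF_CORES // REF_RATE_MB_S)


# Assumed round-number read rates per drive type, in MB/s.
drives = {"HDD": 100, "Gen3 NVMe": 3500, "Gen4 NVMe": 7000}

for name, rate in drives.items():
    cores = cores_needed(rate)
    verdict = "fits" if cores <= CONSOLE_CORES else "does not fit"
    print(f"{name}: {cores} core(s), {verdict} in {CONSOLE_CORES} cores")
```

Under those assumptions an HDD-era game needs about one core, while Gen3 and Gen4 rates already blow past the 8 cores a console has, before the game itself gets any CPU time.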

This is what Tim was talking about:

You may hate him for whatever reason but I don't see anything wrong with what he's said here. It honestly lines up with what MS and NVIDIA are saying now almost a year later.