Reality Creation on Bravia in game like Dying Light 2 is a benediction

- Thread starter assurdum

assurdum

Banned

Since the (double database lookup of the) X1 processor that debuted in the KD-55/65/85 X9000A TVs at the PS4 launch, Sony has been using a form of ML upscaling with their signal processing, which is semantically no different than the lag that Deep Learning Super Sampling DLSS adds, only that one is in engine, and one isn't - and the lag in the TV chip is a function of the fixed silicon/settings - so it will be interesting to see who like DLSS when lag is small but is anti ML upscaling in TVs when it is equally small.

For many people in this thread it's just about trolling and nothing more. RDR2 can finally be fixed on PS5, along with many other problematic games with aggressive TAA or low resolution. Dying Light 2 at 1080p without it is a no-no. If you have one of the latest Bravias, it's really a good option.

Kuranghi

Member

No OP, in my experience I don't think Reality Creation adds an amount of input lag you need to worry about, especially with singleplayer games. That experience being testing/playing with every Sony TV model from 2017-2020 for dozens of hours each.

I told you in the Motion Flow thread you made and I'll tell you again: PM me any time with Sony TV questions instead of making a thread, I really don't mind answering them. I don't mean the thread title ofc, I mean the question about Reality Creation adding lag or how TV features work.

assurdum

Banned

> I think OP is trolling us. Cant be real.

I really need to ask where I'm trolling. There are a good amount of games with notable bad IQ because BC or with horrible resolution at 60 FPS. Reality Creation on last Bravia have a dedicated chip to help to fix, input lag is not too far off to DLSS implementation but still I continue to read post about lololol I preferred horrible IQ because you doh idiot you don't understand a shit lololol interpolation. Even at the primary school I get less childish attitude.

I’m happy you made the thread. I didn’t think of using it and that shit was unplayable in 1080p without it. Now I’m 6 hours in and loving it.

assurdum

Banned

My main purpose was to give a tip to anyone who can't stand horrible IQ in PS5 games for whatever reason. Reality Creation on Bravia TVs is really an outstanding tool. I don't know how well it works without the X1 chip though.

coffinbirth

Member

> So wait you blame me to praise Reality Creation but meanwhile you even care to post a link about a test of that intolerable input lag for Reality Creation?

Wow

Google is FREE, pal.

Have you stopped to wonder why everyone keeps roasting you in these threads?

Do the goddamned research instead of all this yammering on endlessly and erroneously about established realities and for the love of bacon stop making these silly threads, ffs.

It doesn't seem as though English is your native tongue, otherwise I would question that tenuous grasp you seem to have on it.... because the result is most of what you are saying sounds like gibberish, to be frank.

FloopCookiefix

Member

I agree with OP and spreading the good news about Reality Creation- I use it when playing games on my PS5 all the time. I tend to keep it around 40-60, and honestly I think it's pretty amazing, especially for some lower resolution games. It's seriously like getting an actual resolution bump, without doing something straight up jank like just turning up the Sharpness setting to max.

I also personally don't notice any increased input lag, unlike when turning on interpolation processing features and certain other ones. If anything, the lag just from playing in any other picture mode than Game is significantly more noticeable. And well, thankfully, this is able to be used in Game Mode.

Instead of instantly dismissing/mocking, if you have the feature on your Bravia TV then just... try it for yourself? If you notice more lag, then by all means turn it off and move on with your life, but you might find it to be a really cool feature

assurdum

Banned

Dude you are free to ignore the thread indeed to complain about his stupidity as many others there if they are here just to mock me. So I can't even complain if someone troll the whole time adding nothing to the discussion, are you fucking serious? You are even pissed off if ask to you to post some link about the entity of the input lag, when even on DLSS give such issue, I think it's normal to ask to have more data, it's the point of thread.

You can't even tolerate my english lol. Get a life outside of the forum if you can't stand some discussion there, what the hell you want

01011001

Banned

what is the added input lag on this? Sony TVs already are not the best when it comes to lag and I would avoid them for gaming... this can not be a good way to play games.

also I see people mentioning DLSS having input lag??? wtf? first of all, no, it has basically little to no added input lag and secondly DLSS will actually run the game way faster which instantly mitigates that issue completely if it was there.

as soon as you use DLSS in your game it will run at a higher framerate and your latency WILL GO DOWN... that is true across the board

what you are doing on that TV is running the game at the same framerate but making it look smoother + adding lag on top of that. not even remotely comparable in any way

edit: so the only number I can find online for this is a 1 yo post where people suggest that on the newest TV back then it added about 30ms of lag... that is atrocious!

this is not 30ms total mind you, that is about 20ms to 25ms for the normal lag you have on a typical Bravia + another 30ms of lag.

but that wasn't a concrete test I found just some TV enthusiasts talking about it online.
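To make the stacking explicit, here is a quick sketch of the arithmetic. The 20 to 25 ms baseline and the roughly 30 ms added figure are the unverified numbers quoted in this post, not measurements, and `total_display_lag_ms` is just a name made up for illustration.

```python
def total_display_lag_ms(baseline_ms: float, added_ms: float) -> float:
    """Display lag if a processing feature stacks on top of the TV's baseline lag."""
    return baseline_ms + added_ms

# Unverified forum figures: 20-25 ms baseline, ~30 ms claimed for Reality Creation.
low = total_display_lag_ms(20.0, 30.0)
high = total_display_lag_ms(25.0, 30.0)
print(f"{low:.0f} to {high:.0f} ms total")  # prints "50 to 55 ms total"
```

If the 30 ms claim turned out to be wrong, only the `added_ms` argument changes; the point is simply that the two figures add rather than overlap.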

TGO

Hype Train conductor. Works harder than it steams.

Sorry guys....I'm guilty of this too....

But It does improve the image quality, especially on games with softer outputs and it's not the same as sharpening, there is no over sharpening effect.

although I wouldn't put as high as the OP, Automatic should be enough.

I can't say I've noticed the lag with MS sensitive games like VFV and Street Fighter V so it can't be much and I mostly play slower paced games anyway.

Not saying there isn't lag I'm sure there is but if you can't notice it, who cares?

But I understand peoples reaction.

It should be a big no no.

assurdum

Banned

I noticed the lag when many others started to blame this thread (honestly I wouldn't even noticed it without a straight comparison and paying attention) but I have to say it's very minimal and in game like Dying Light 2 is preferably all the life than the muddy IQ it gives at 1080p. It's a fucking nightmare to play it in a 4k screen. Yes there is the resolution mode but here input lag of 30 FPS is definitely more noticeable than the Reality Creation one. Said that, why with DLSS no one put the same energy to lol about it as they did for the input lag of Bravia TV upscaling? ML upscaling cause it in all the implementation.

coffinbirth

Member

You asked me a question, and I answered. It's not my problem if you don't like the answer.

Trolling=/=pointing out the obvious. See above.

I added everything I needed to this "discussion" in my first post, you've just decided to try to argue without doing any research on the topic.

If you can't notice literal frames being off, I don't know what else to tell you...and perhaps pointing this out to someone with a proven track record of playing console games on a television that is not running in proper game mode with a wireless controller is my mistake to begin with, but regardless, I'm not telling you how to play your games, I just said the lag was unacceptable to me. Also worth pointing out that Bravia TVs by default have A/V sync on, which introduces a ton of lag as well.

If English isn't your 1st language, I apologize...but it's pretty hard to understand you. That has nothing to do with my life, which is pretty great, lol.

assurdum

Banned

Dude if you can't stand the input lag in Bravia TV it's your problem, not mine, I don't see the reasons to make a whole post about the stupidity of the thread when I gently ask to you a concrete data of the entity of the input lag of Reality Creation just to compare it to the Nvidia DLSS because it's not even that easily perceivable. I don't like your tone in the previous post, not your answer.

01011001

Banned

because DLSS adds AT MOST maybe 1ms or 2ms of lag while also making games run faster which in return REDUCES lag.

if you play a game that has 60ms of engine latency at 60fps, and then play the same game at 60fps but with DLSS on, you will have 61ms or 62ms of lag instead of the 60ms. that is almost not noticeable to any degree.

but that is not the typical use case of DLSS is it? no, the typical use case of DLSS is to get a better framerate out of your system by running the game at a lower internal resolution. which in return means that the latency of your game WILL GO DOWN not up while using DLSS.

even the tiny added latency wouldn't be an issue, but now that latency is completely countered by the faster framerate

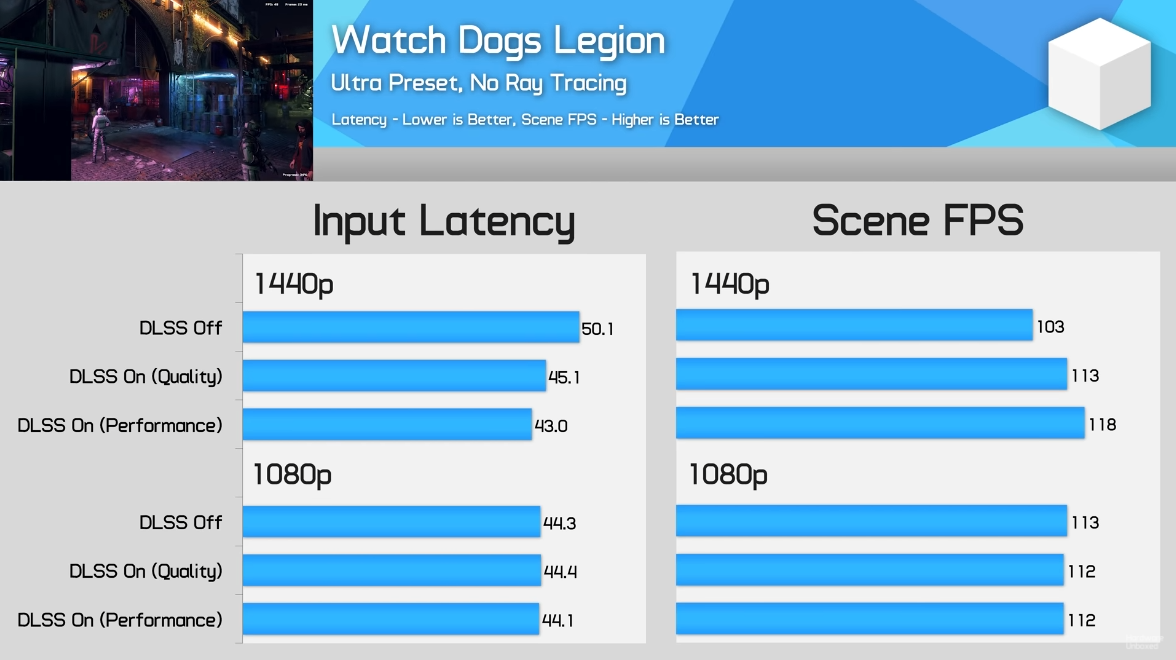

here is a chart of Watch_Dogs Legion using DLSS. notice the CPU bound 1080p test here where DLSS runs at basically the same framerate and with almost identical input latency compared to the native render version. we are talking differences in the 0.1 to 0.2 ms range here!

(and these are tests performed by Hardware Unboxed, which are often called AMD fanboys btw, which is of course not true but just saying. they aren't known to shill for Nvidia or anything of the kind)

the framerate improvement or differences in the 1440p tests correspond almost 100% with the latency reduction. meaning, this basically tells us that DLSS does not add any real input lag to the game. if it adds latency it is such a tiny amount that it would fall into the realm of expected variance during measurement

comparing this latency that may or may not be added, which is hard to tell because the differences are so tiny in measurements, is not even remotely comparable to a worse image enhancer adding 30!!!! milliseconds of latency to the game. 30ms is already bad as a baseline for any modern TV where sub 20ms of lag is expected now, but it adds those 30ms on top of the already existing latency of the TV.
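The DLSS argument above can be put into a rough back-of-the-envelope model. This is only a sketch: it assumes a game's end-to-end latency is a fixed number of frames (so it scales with frame time), the 60 ms and 2 ms figures are the example values from this post, and the 80 fps figure is a hypothetical chosen purely for illustration. The function name is made up.

```python
def latency_with_upscaler_ms(base_latency_ms, base_fps, new_fps, overhead_ms):
    """Estimate latency after enabling an upscaler.

    Assumption (not a measured fact): latency is a fixed number of frames,
    so it shrinks in proportion to frame time when the framerate rises.
    """
    return base_latency_ms * base_fps / new_fps + overhead_ms

# Same framerate: only the upscaler's own overhead is added.
same_fps = latency_with_upscaler_ms(60, 60, 60, 2)
# Hypothetical case: the lower internal resolution lifts the game to 80 fps.
faster = latency_with_upscaler_ms(60, 60, 80, 2)
print(f"{same_fps:.1f} ms at 60 fps, {faster:.1f} ms at 80 fps")
# prints "62.0 ms at 60 fps, 47.0 ms at 80 fps"
```

A TV-side enhancer cannot change the game's framerate, so under this model only the overhead term applies to it.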

Phunkydiabetic

Banned

Tried already with RDR2 and Far Cry 6: Reality Creation setting manually at the max it's almost transformative in such games with very aggressive TAA and blurred IQ. Unfortunately super resolution work very awful with most CBR solutions (strangely not with RDR2) but to play Dying Light 2 at 60 FPS I really suggest to use it to eliminate the annoying hazed vegetation. I start to really appreciate my Bravia after the first bad impression of the HDR tone compared the LG, at least for stuff like this.

I stand in solidarity with you my friend. Reality Creation works wonders, if set too high it can introduce artifacts but when left on auto or dialed in properly it's

Hollowpoint5557

A Fucking Idiot

So for the hell of it I went and tried it on Battlefield 4 which is notorious for its blurry low resolution and I was shocked by the results. NO perceptible lag added and the image was so much better.

Bulletbrain

Member

Sharpening is almost a requirement these days with the abundant use of TAA. I always add a tiny amount of sharpening via NVIDIA control panel. Usual TV sharpness however does not look good, but I guess the Bravia tech is a good compromise, especially for those who aren't so input lag sensitive.

thebigmanjosh

Member

> Unlike the interpolation thread, I agree with OP. Bravia upsampling is really good.
>
> I was just clearing up the DLC trophies on the PS4 version of Control and used the creation engine and it cleaned up the image a lot, actually made the textures look a lot better.

Agreed. There’s a negligible hit to input lag and it does an incredible job upscaling old content, especially on the newer TVs.

On my A90J 4k native content with the AI/RC upscaling looks super sampled without over-sharpening the picture.

01011001

Banned

the only number I can find online from about a year ago is 30ms and someone from 4 months ago saying it adds noticeable lag at anything above setting it to 0... and if that is accurate then that is not negligible at all.

are there any credible sources where the latency is measured?

because I highly doubt that this has no noticeable lag.

JackSparr0w

Banned

I feel sorry for console gamers if only they knew what a PC tool like Reshade can do.

Kupfer

Member

Hard to imagine, can you give us some examples?

Kuranghi

Member

There isn't any properly measured data anywhere from what I've seen over the years; I only have my own and others' anecdotal experience with it. Like now I can turn it on and off manually while doing mouse circles on the desktop and there's no way in hell 30ms is being added, that would be adding nearly 2 more frames of lag at 60hz (desktop is at 60hz) on top of whatever there already is from source to display.

If I switch from triple buffered v-sync to double-buffered v-sync in a game running at 60hz, thats a definitely noticeable increase in input lag for me. Thats adding 16.66ms afaik so theres no way I wouldn't notice a 30ms increase on the desktop. So I do think it adds some amount of lag but its very small and definitely not something most players would notice or an amount I would care about even if I did use it.

People in here saying SFV/FGs aren't noticeably affected by using is more evidence its not something to worry about. I think if it mattered much then enthusiasts would be measuring it and telling us as another metric to make TVs compete, just like with comparing PC components, its all about the drama and video thumbnails for many.
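For reference, converting a lag figure in milliseconds to frames at a given refresh rate is a one-line calculation. A minimal sketch (the helper name `ms_to_frames` is made up for illustration):

```python
def ms_to_frames(lag_ms: float, refresh_hz: float) -> float:
    """Convert a lag in milliseconds to a number of refresh intervals."""
    frame_time_ms = 1000.0 / refresh_hz
    return lag_ms / frame_time_ms

print(f"frame time at 60 Hz: {1000.0 / 60:.2f} ms")           # prints "frame time at 60 Hz: 16.67 ms"
print(f"30 ms at 60 Hz: {ms_to_frames(30, 60):.1f} frames")   # prints "30 ms at 60 Hz: 1.8 frames"
```

So one frame at 60hz is 16.67 ms, and the claimed 30 ms would be a little under two frames.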

Kuranghi

Member

Nvm, I was wrong, I thought X900h has no RC.

It has no Smooth Gradation, is that what you were thinking of? Which is why I said don't buy X900H, its "X1" isn't even as good as the one in the X850E/X900E from 2017 due to lacking that feature. It has no super bit mapping for improving gradients and its gradient handling in general is reportedly worse than the previous X1 chip TVs.

TheGodfather07

Member

Op I ain't going to shit on you like most others. I think people are mistaking this for motion smoothing, which adds input delay. Reality creation doesn't seem to affect the input at all and it does a good job of making games look clearer. It's especially good with older games. Really clears those blurry backgrounds.

StateofMajora

Banned

> Yes.
>
> And they stack the more of these "enhancements" you use.
>
> THIS WAS ALREADY COVERED IN YOUR PREVIOUS THREAD.

You are wrong. Turning on reality creation in game mode (it’s on by default in game mode btw) does not add additional lag.

Whilst you are in game mode, the only setting that adds additional input lag on Sony bravia modern sets is black frame insertion specifically on oled tvs. The setting is under motion settings and is listed as “clearness”.

Source : Myself with many different Sony tvs and leo bodnar lag tester tool.
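For anyone who wants to check this with a lag tester themselves, one simple way to compare is to take several readings with the setting off and on and look at the difference of the means against the run-to-run spread. This is only a sketch of that comparison: the function name is made up, and the readings below are placeholders for illustration, NOT real measurements from any TV.

```python
from statistics import mean, stdev

def added_lag_ms(readings_off, readings_on):
    """Mean difference (on minus off) between two sets of lag readings, in ms."""
    return mean(readings_on) - mean(readings_off)

# Placeholder values only, NOT real measurements.
off = [20.0, 21.0, 22.0]
on = [21.0, 22.0, 23.0]

diff = added_lag_ms(off, on)
spread = max(stdev(off), stdev(on))
print(f"added lag ~ {diff:.2f} ms, run-to-run spread ~ {spread:.2f} ms")
```

If the difference is no bigger than the spread, the readings cannot really tell the two settings apart.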

assurdum

Banned

I tried to capture some screen with my ps5 but it seems it capture the ps5 output without the Reality Creation of Bravia. If someone know how I can capture shot from the Bravia I will try again because otherwise on ps5 it doesn't works. I assure to you in 1080p games or with aggressive TAA this TV setting is a benediction. Dying Light 2 is definitely more pleasant to look otherwise it's a pain to look.

> Input latency in ms of the screen not correspond exactly to the ms of the controller input lag, from what I have understood.

you understand wrong and should probably try and have a basic grasp of shit you talk about

coffinbirth

Member

Prove it.

There are multiple reports of it adding up to 30ms, and anecdotally there is perceivable lag. Also, not on as default in Game mode with x900f, as you claim...in fact, there isn't even an option for "on".

StateofMajora

Banned

I’m not in the habit of making a video to prove people talking out of their ass wrong. I’d be doing that all the time!

I can guarantee you that you will not find a “source” with video documentation to support these 30ms claims.

If someone respectfully asked me out of genuine curiosity I would consider it.

I have owned x900e. z9f, x950g, a8g, a8h, A9S and a80j sony bravia tvs.

coffinbirth

Member

So, no then. OK.

Also, nothing to say about not being able to select it in Game mode on X900F?

Shmunter

Member

From memory 900f has actually downgraded the processing chip and option from 900e. Think it was reinstated on 900g onwards.

01011001

Banned

because none of the usual reviewers even considers testing for it... all I found was a single guy that tested his new TV about a year ago and gave people info on what does and doesn't add latency to the image. upon someone asking about Reality Creation he said ~30ms of additional lag.

I have no reason to believe that guy is lying, maybe he didn't measure and just gave an estimate, but 30ms is a lot so if it was an estimate he clearly felt it.

StateofMajora

Banned

I have never used the x900f but that doesn’t sound right to me. I know others on this board have that unit so maybe they can chime in.

Edit : Although as I said I have used A8G which shares the x1 extreme chip in the x900f which absolutely had reality creation in game mode.

StateofMajora

Banned

30ms of additional lag is an absurd claim on its face. That is nearly 2 entire frames of lag… it wouldn’t be “yeah you can kind of notice it maybe” it would be “wow that is a huge amount of lag.”

I am able to notice a 5ms difference in display lag (in certain titles where there isn’t already significant game lag) so a 30ms difference would be like a slap in the face.

Anyone making that claim has obviously nothing whatsoever to back it up, and for good reason.

Shmunter

Member

I play COD on 900e with reality creation. There’s no added lag on or off and I’m pretty astute to these things. E.g. Can’t play Cold War with RT on for example because latency is night and day to me with it on/off - I’m sure many don’t even notice that.

StateofMajora

Banned

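coffinbirth Actually now that I think about it you may not even understand how the reality creation toggle works; there is no option for on because it’s either off, auto or manual, in which you can adjust the values between 0 and 100.

Manual is “on”.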

01011001

Banned

well you have nothing to back up your claim either. so either way we have no trustworthy info on this

and I have come to mistrust anyone who claims low input latency online, since we still actually have people that will tell you that Killzone 2 felt good to play

I'm maybe biased towards believing people that say something has high lag more than people that say the lag is low due to these examples of obvious latency being waved away by so many people... playing Remote Play on PS4 is another one of those examples. that shit has atrocious lag but you will find people that tell you they don't feel it

StateofMajora

Banned

I have nothing to back it up because i’m not paid to do so lol. Maybe one day.

Trust me I understand and i’m not demanding anyone believe me. It’s just that I don’t respond well to people saying I am full of it when i’m just sharing my information, I don’t have anything to gain from lying.

01011001

Banned

it is a bit weird that no one who is paid to do it tests this. it seems review sites simply test Game Mode with everything off to have the optimal values and don't go deeper.

features like these would be interesting to test so it's kinda disappointing no one does

StateofMajora

Banned

It’s part of the reason I often contemplate making videos myself, but i’m going to be very busy until at least the summer. And I still don’t know how to calibrate which i’d like to learn as well.

coffinbirth

Member

> coffinbirth Actually now that I think about it you may not even understand how the reality creation toggle works ; there is no option for on because it’s either off, auto or manual in which you can adjust the values between 0 and 100.
>
> Manual is “on”.

It's greyed out, no option to toggle anything...

So, I guess these guys are wrong too, huh?

StateofMajora

Banned

There shouldn’t be a difference in an x1 extreme chip oled a8g vs 900f.

They’re probably a PR person and yes probably wrong. X900h I have also not tested. But it’s important to note it doesn’t actually have an additional picture processor like other Sony x1 tvs ; just the mediatek chip. So it could perform differently with regards to input lag.

But my parents happen to have an x85j tv which is effectively the same tv as x900h minus the local dimming (x85j is edge lit). So maybe I will test that out sometime.
