No.

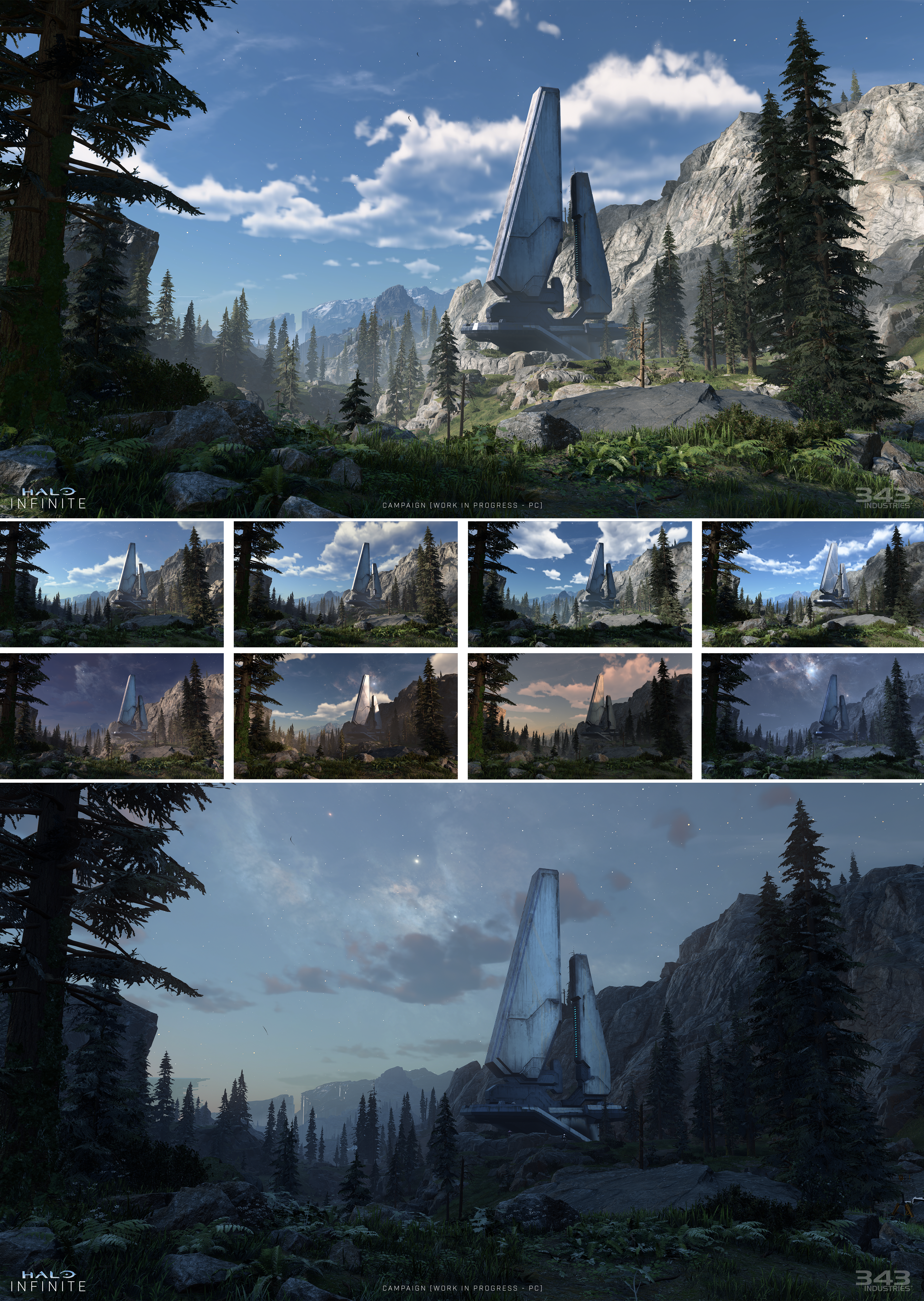

The engine preference has nothing to do with the environment sky-lighting equation. Most of these engines are deferred because they need many (small) light sources and rely heavily on the depth buffer for screen-space calculations. Considering this is shown on a PC, I'd expect much better lighting than this. If Cyberpunk can implement proper ambient occlusion despite using RT, then 343 can implement it too. There are far too many papers out there on how to implement good environment lighting not to do it properly. Add DLSS into the mix to bring your frame times down and you've got your 60 FPS easily, even on mid-tier GPUs. The XSX is another story, but we are being shown PC images.

Going by your last comment, you sound out of your depth, technically speaking, on the choice of renderer.

For a competitive shooter that relies on accurate z-buffer picking to determine hit detection, ML upscaling is just a big fat no. You would effectively be picking at the 720p native resolution, not at 4K, because you can't ML-enhance missing depth-buffer values. That is especially true when the values in the native z-buffer are perspective-projected at an accuracy that is already way below the precision needed to reconstruct accurate positions for shadow-map comparisons, with errors growing to metres as geometry recedes from the foreground into the background.
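To put a rough number on that precision argument, here is a minimal sketch, assuming a conventional (non-reversed) D3D-style projection that maps view depth z in [near, far] to d = far/(far − near) · (1 − near/z), stored as an unsigned-normalized integer. The function names and clip-plane values are hypothetical, not taken from any engine. Differentiating gives a step of roughly Δd · z² · (far − near)/(far · near), so world-space resolution degrades quadratically with distance; the code measures it exactly by stepping one integer depth code.

```python
"""Sketch: world-space resolution of a quantized perspective depth buffer.

Assumptions (hypothetical, not from any specific engine): a conventional,
non-reversed D3D-style projection mapping view depth z in [near, far] to
window depth d in [0, 1], stored as an unsigned-normalized integer.
"""


def window_depth(z: float, near: float, far: float) -> float:
    """Map view-space depth z (metres) to window depth d in [0, 1]."""
    return far / (far - near) * (1.0 - near / z)


def view_depth(d: float, near: float, far: float) -> float:
    """Inverse of window_depth: recover view-space depth from d."""
    return near / (1.0 - d * (far - near) / far)


def depth_step(z: float, near: float, far: float, bits: int) -> float:
    """Distance in metres between adjacent representable depth codes near z."""
    levels = (1 << bits) - 1              # largest integer code, e.g. 2^24 - 1
    code = round(window_depth(z, near, far) * levels)
    code = min(code, levels - 1)          # keep code + 1 inside the valid range
    z0 = view_depth(code / levels, near, far)
    z1 = view_depth((code + 1) / levels, near, far)
    return z1 - z0


if __name__ == "__main__":
    near, far = 0.1, 1000.0               # hypothetical clip planes in metres
    for bits in (16, 24):
        for z in (1.0, 10.0, 100.0, 500.0):
            step = depth_step(z, near, far, bits)
            print(f"{bits}-bit depth, z = {z:6.1f} m: step = {step:.6f} m")
```

The sketch ignores reversed-Z and floating-point depth formats, which change these numbers considerably.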

Trying to use ML to create those missing values wouldn't work, and aiming at 720p in the engine while looking at 4K would not be a suitable solution either, because the user would have an incoherent view of what they are doing.
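The 720p-picking point can be illustrated with a minimal sketch, assuming a hypothetical 1280×720 internal depth buffer behind a 3840×2160 output, and a pick that simply reads the nearest native depth texel with no reconstruction. The names here are illustrative only. With a 3× scale on each axis, nine displayed pixels share one depth sample, so the crosshair can move across three on-screen pixels without the depth value it picks changing.

```python
from __future__ import annotations

# Hypothetical resolutions: 720p internal depth behind a 4K display.
NATIVE = (1280, 720)
OUTPUT = (3840, 2160)


def output_to_native(x: int, y: int) -> tuple[int, int]:
    """Map a displayed 4K pixel to the native depth texel covering its centre."""
    sx = NATIVE[0] / OUTPUT[0]
    sy = NATIVE[1] / OUTPUT[1]
    # Sample at the pixel centre (x + 0.5), then truncate to a texel index.
    return int((x + 0.5) * sx), int((y + 0.5) * sy)


def pixels_per_texel() -> float:
    """How many displayed pixels share a single native depth sample."""
    return (OUTPUT[0] / NATIVE[0]) * (OUTPUT[1] / NATIVE[1])


def output_pixels_sharing_texel(tx: int, ty: int) -> list[tuple[int, int]]:
    """Displayed pixels whose pick would read the same native depth texel."""
    # Scan only a small window around the texel's footprint (enough for the
    # small scale factors assumed here) instead of the whole 4K frame.
    x0 = int(tx * OUTPUT[0] / NATIVE[0])
    y0 = int(ty * OUTPUT[1] / NATIVE[1])
    return [
        (x, y)
        for y in range(max(0, y0 - 2), min(OUTPUT[1], y0 + 6))
        for x in range(max(0, x0 - 2), min(OUTPUT[0], x0 + 6))
        if output_to_native(x, y) == (tx, ty)
    ]


if __name__ == "__main__":
    centre = (OUTPUT[0] // 2, OUTPUT[1] // 2)
    texel = output_to_native(*centre)
    print("crosshair", centre, "reads native depth texel", texel)
    print("pixels sharing that texel:", output_pixels_sharing_texel(*texel))
```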

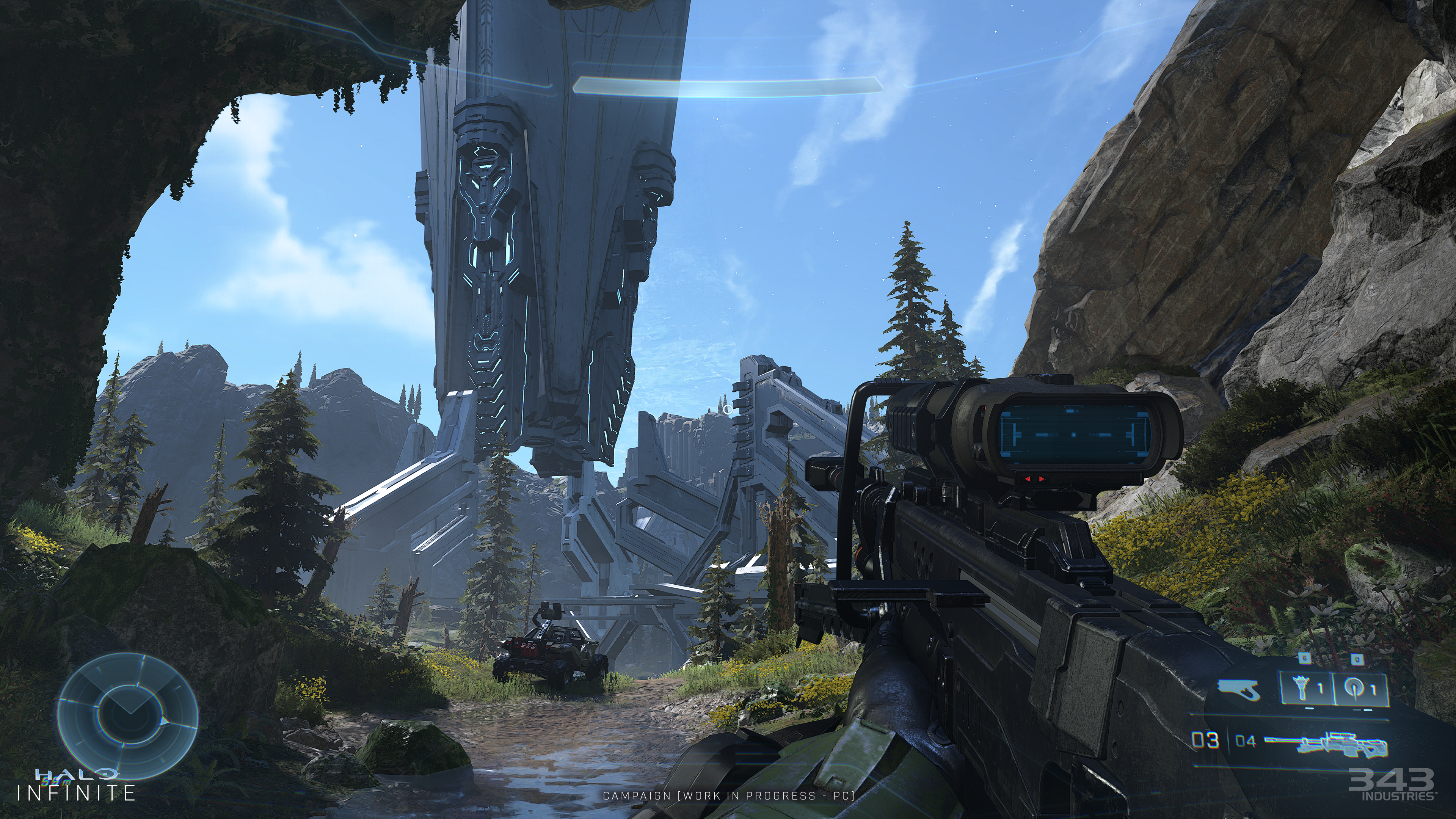

The first set of pictures for this game screamed forward renderer going by the artefacts, IMO, and these shots still have the hallmark visuals of a forward renderer, with aliasing that never exists in modern deferred games. Fewer passes versus more rendered passes is a keystone design choice when responsiveness, frame rate and high native resolution are your game's constraints, especially when you are scaling up from Xbox One.
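As a rough sketch of why pass count matters when high native resolution is a constraint, the arithmetic below estimates the fixed cost a deferred renderer pays to write and then read back its G-buffer every frame. The layout (four RGBA8 targets plus a 32-bit depth buffer), the names and the single lighting read are hypothetical assumptions for illustration, not any shipped game's layout; a forward renderer avoids this particular cost but pays elsewhere, for example by shading each object per light.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class RenderTarget:
    name: str
    bytes_per_pixel: int


# Hypothetical textbook G-buffer: four RGBA8 targets plus 32-bit depth.
GBUFFER = [
    RenderTarget("albedo_rgba8", 4),
    RenderTarget("normal_rgba8", 4),
    RenderTarget("material_rgba8", 4),
    RenderTarget("emissive_rgba8", 4),
    RenderTarget("depth_32bit", 4),
]


def gbuffer_bytes(width: int, height: int, targets: list[RenderTarget]) -> int:
    """Storage for one frame's G-buffer in bytes."""
    return width * height * sum(t.bytes_per_pixel for t in targets)


def gbuffer_traffic_gb_per_s(width: int, height: int, targets: list[RenderTarget],
                             fps: float, reads_per_frame: int = 1) -> float:
    """Lower-bound G-buffer traffic in GB/s: one full write plus full reads.

    Ignores caches, framebuffer compression and MSAA, all of which change
    real-world numbers considerably.
    """
    per_frame = gbuffer_bytes(width, height, targets) * (1 + reads_per_frame)
    return per_frame * fps / 1e9


if __name__ == "__main__":
    for w, h in ((1280, 720), (2560, 1440), (3840, 2160)):
        mb = gbuffer_bytes(w, h, GBUFFER) / 1e6
        bw = gbuffer_traffic_gb_per_s(w, h, GBUFFER, fps=60)
        print(f"{w}x{h}: {mb:.1f} MB per frame, ~{bw:.1f} GB/s at 60 fps")
```

The cost scales linearly with pixel count, so going from 720p to native 4K multiplies it by nine.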

Most modern third-party shooters that target 60 fps on all target platforms ultimately use a forward renderer; the most recent example that comes to mind is Titanfall, which Respawn built on a modified Source engine. And being a VFX artist, you should be familiar with how difficult advanced special effects are when everything has to be done in a single pass, because complex VFX are typically more performant and easier to implement in a deferred renderer, where intermediate values can be saved and shared between passes or at final frame construction.

If you are correct and they are doing deferred rendering, then the game's artefacts look more like industrial sabotage by employees than graphical oversights, because having 3 or 4 buffers assembled to construct those final frame images should automatically alleviate the artefacts.

Also, IIRC, I thought poor SFS (Sampler Feedback Streaming) and VRS (Variable Rate Shading) implementations were supposedly a big contributor to the issues with the original reveal. Correct me if I'm wrong, but if you are using those two techniques with a deferred renderer, how would they ever be more than one frame away from correcting an incorrect texture LOD or an inadequate shading rate, when previous deferred results could have been accumulated to hide the issues?
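The accumulation idea in that question can be sketched with a simple exponential history blend, the basic building block of temporal accumulation. This is a minimal illustration, assuming a single pixel, a hypothetical blend factor alpha = 0.1 and one frame shaded wrongly; it is not how SFS or VRS work internally, and real temporal techniques also need reprojection and history rejection.

```python
def accumulate(frames: list[float], alpha: float = 0.1) -> list[float]:
    """Exponential history blend per frame: history += alpha * (current - history).

    A stand-in for temporal accumulation on a single pixel; real TAA-style
    accumulation also reprojects history with motion vectors and clamps it,
    which this sketch leaves out.
    """
    history = None
    out = []
    for current in frames:
        if history is None:
            history = current          # first frame seeds the history
        else:
            history = history + alpha * (current - history)
        out.append(history)
    return out


if __name__ == "__main__":
    # Hypothetical pixel: correct shading is 1.0, but one frame is shaded at 0.0
    # (e.g. a too-coarse shading rate or a not-yet-resident mip level).
    frames = [1.0] * 5 + [0.0] + [1.0] * 5
    for i, (raw, blended) in enumerate(zip(frames, accumulate(frames))):
        print(f"frame {i:2d}: raw {raw:.2f}  accumulated {blended:.3f}")
```

With alpha = 0.1, the one bad frame only pulls the result 10% of the way towards the wrong value, and the error decays over the following frames. The trade-off is that genuine changes are smeared across several frames too, which adds perceived latency.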